fixed conflict vl 0811

commit

17b1312e7c

|

|

@ -131,7 +131,7 @@ pip3 install dist/PPOCRLabel-1.0.2-py2.py3-none-any.whl -i https://mirror.baidu.

|

|||

|

||||

> 注意:如果表格中存在空白单元格,同样需要使用一个标注框将其标出,使得单元格总数与图像中保持一致。

|

||||

|

||||

3. **调整单元格顺序:**点击软件`视图-显示框编号` 打开标注框序号,在软件界面右侧拖动 `识别结果` 一栏下的所有结果,使得标注框编号按照从左到右,从上到下的顺序排列

|

||||

3. **调整单元格顺序**:点击软件`视图-显示框编号` 打开标注框序号,在软件界面右侧拖动 `识别结果` 一栏下的所有结果,使得标注框编号按照从左到右,从上到下的顺序排列,按行依次标注。

|

||||

|

||||

4. 标注表格结构:**在外部Excel软件中,将存在文字的单元格标记为任意标识符(如 `1` )**,保证Excel中的单元格合并情况与原图相同即可(即不需要Excel中的单元格文字与图片中的文字完全相同)

|

||||

|

||||

|

|

|

|||

|

|

@ -1,41 +1,78 @@

|

|||

[English](README_en.md) | 简体中文

|

||||

|

||||

# 场景应用

|

||||

|

||||

PaddleOCR场景应用覆盖通用,制造、金融、交通行业的主要OCR垂类应用,在PP-OCR、PP-Structure的通用能力基础之上,以notebook的形式展示利用场景数据微调、模型优化方法、数据增广等内容,为开发者快速落地OCR应用提供示范与启发。

|

||||

|

||||

> 如需下载全部垂类模型,可以扫描下方二维码,关注公众号填写问卷后,加入PaddleOCR官方交流群获取20G OCR学习大礼包(内含《动手学OCR》电子书、课程回放视频、前沿论文等重磅资料)

|

||||

- [教程文档](#1)

|

||||

- [通用](#11)

|

||||

- [制造](#12)

|

||||

- [金融](#13)

|

||||

- [交通](#14)

|

||||

|

||||

- [模型下载](#2)

|

||||

|

||||

<a name="1"></a>

|

||||

|

||||

## 教程文档

|

||||

|

||||

<a name="11"></a>

|

||||

|

||||

### 通用

|

||||

|

||||

| 类别 | 亮点 | 模型下载 | 教程 |

|

||||

| ---------------------- | ------------ | -------------- | --------------------------------------- |

|

||||

| 高精度中文识别模型SVTR | 比PP-OCRv3识别模型精度高3%,可用于数据挖掘或对预测效率要求不高的场景。| [模型下载](#2) | [中文](./高精度中文识别模型.md)/English |

|

||||

| 手写体识别 | 新增字形支持 | | |

|

||||

|

||||

<a name="12"></a>

|

||||

|

||||

### 制造

|

||||

|

||||

| 类别 | 亮点 | 模型下载 | 教程 | 示例图 |

|

||||

| -------------- | ------------------------------ | -------------- | ------------------------------------------------------------ | ------------------------------------------------------------ |

|

||||

| 数码管识别 | 数码管数据合成、漏识别调优 | [模型下载](#2) | [中文](./光功率计数码管字符识别/光功率计数码管字符识别.md)/English | <img src="https://ai-studio-static-online.cdn.bcebos.com/7d5774a273f84efba5b9ce7fd3f86e9ef24b6473e046444db69fa3ca20ac0986" width = "200" height = "100" /> |

|

||||

| 液晶屏读数识别 | 检测模型蒸馏、Serving部署 | [模型下载](#2) | [中文](./液晶屏读数识别.md)/English | <img src="https://ai-studio-static-online.cdn.bcebos.com/901ab741cb46441ebec510b37e63b9d8d1b7c95f63cc4e5e8757f35179ae6373" width = "200" height = "100" /> |

|

||||

| 包装生产日期 | 点阵字符合成、过曝过暗文字识别 | [模型下载](#2) | [中文](./包装生产日期识别.md)/English | <img src="https://ai-studio-static-online.cdn.bcebos.com/d9e0533cc1df47ffa3bbe99de9e42639a3ebfa5bce834bafb1ca4574bf9db684" width = "200" height = "100" /> |

|

||||

| PCB文字识别 | 小尺寸文本检测与识别 | [模型下载](#2) | [中文](./PCB字符识别/PCB字符识别.md)/English | <img src="https://ai-studio-static-online.cdn.bcebos.com/95d8e95bf1ab476987f2519c0f8f0c60a0cdc2c444804ed6ab08f2f7ab054880" width = "200" height = "100" /> |

|

||||

| 电表识别 | 大分辨率图像检测调优 | [模型下载](#2) | | |

|

||||

| 液晶屏缺陷检测 | 非文字字符识别 | | | |

|

||||

|

||||

<a name="13"></a>

|

||||

|

||||

### 金融

|

||||

|

||||

| 类别 | 亮点 | 模型下载 | 教程 | 示例图 |

|

||||

| -------------- | ------------------------ | -------------- | ----------------------------------- | ------------------------------------------------------------ |

|

||||

| 表单VQA | 多模态通用表单结构化提取 | [模型下载](#2) | [中文](./多模态表单识别.md)/English | <img src="https://ai-studio-static-online.cdn.bcebos.com/a3b25766f3074d2facdf88d4a60fc76612f51992fd124cf5bd846b213130665b" width = "200" height = "200" /> |

|

||||

| 增值税发票 | 尽请期待 | | | |

|

||||

| 印章检测与识别 | 端到端弯曲文本识别 | | | |

|

||||

| 通用卡证识别 | 通用结构化提取 | | | |

|

||||

| 身份证识别 | 结构化提取、图像阴影 | | | |

|

||||

| 合同比对 | 密集文本检测、NLP串联 | | | |

|

||||

|

||||

<a name="14"></a>

|

||||

|

||||

### 交通

|

||||

|

||||

| 类别 | 亮点 | 模型下载 | 教程 | 示例图 |

|

||||

| ----------------- | ------------------------------ | -------------- | ----------------------------------- | ------------------------------------------------------------ |

|

||||

| 车牌识别 | 多角度图像、轻量模型、端侧部署 | [模型下载](#2) | [中文](./轻量级车牌识别.md)/English | <img src="https://ai-studio-static-online.cdn.bcebos.com/76b6a0939c2c4cf49039b6563c4b28e241e11285d7464e799e81c58c0f7707a7" width = "200" height = "100" /> |

|

||||

| 驾驶证/行驶证识别 | 尽请期待 | | | |

|

||||

| 快递单识别 | 尽请期待 | | | |

|

||||

|

||||

<a name="2"></a>

|

||||

|

||||

## 模型下载

|

||||

|

||||

如需下载上述场景中已经训练好的垂类模型,可以扫描下方二维码,关注公众号填写问卷后,加入PaddleOCR官方交流群获取20G OCR学习大礼包(内含《动手学OCR》电子书、课程回放视频、前沿论文等重磅资料)

|

||||

|

||||

<div align="center">

|

||||

<img src="https://ai-studio-static-online.cdn.bcebos.com/dd721099bd50478f9d5fb13d8dd00fad69c22d6848244fd3a1d3980d7fefc63e" width = "150" height = "150" />

|

||||

</div>

|

||||

|

||||

如果您是企业开发者且未在上述场景中找到合适的方案,可以填写[OCR应用合作调研问卷](https://paddle.wjx.cn/vj/QwF7GKw.aspx),免费与官方团队展开不同层次的合作,包括但不限于问题抽象、确定技术方案、项目答疑、共同研发等。如果您已经使用PaddleOCR落地项目,也可以填写此问卷,与飞桨平台共同宣传推广,提升企业技术品宣。期待您的提交!

|

||||

|

||||

> 如果您是企业开发者且未在下述场景中找到合适的方案,可以填写[OCR应用合作调研问卷](https://paddle.wjx.cn/vj/QwF7GKw.aspx),免费与官方团队展开不同层次的合作,包括但不限于问题抽象、确定技术方案、项目答疑、共同研发等。如果您已经使用PaddleOCR落地项目,也可以填写此问卷,与飞桨平台共同宣传推广,提升企业技术品宣。期待您的提交!

|

||||

|

||||

## 通用

|

||||

|

||||

| 类别 | 亮点 | 类别 | 亮点 |

|

||||

| ------------------------------------------------- | -------- | ---------- | ------------ |

|

||||

| [高精度中文识别模型SVTR](./高精度中文识别模型.md) | 新增模型 | 手写体识别 | 新增字形支持 |

|

||||

|

||||

## 制造

|

||||

|

||||

| 类别 | 亮点 | 类别 | 亮点 |

|

||||

| ------------------------------------------------------------ | ------------------------------ | ------------------------------------------- | -------------------- |

|

||||

| [数码管识别](./光功率计数码管字符识别/光功率计数码管字符识别.md) | 数码管数据合成、漏识别调优 | 电表识别 | 大分辨率图像检测调优 |

|

||||

| [液晶屏读数识别](./液晶屏读数识别.md) | 检测模型蒸馏、Serving部署 | [PCB文字识别](./PCB字符识别/PCB字符识别.md) | 小尺寸文本检测与识别 |

|

||||

| [包装生产日期](./包装生产日期识别.md) | 点阵字符合成、过曝过暗文字识别 | 液晶屏缺陷检测 | 非文字字符识别 |

|

||||

|

||||

## 金融

|

||||

|

||||

| 类别 | 亮点 | 类别 | 亮点 |

|

||||

| ------------------------------ | ------------------------ | ------------ | --------------------- |

|

||||

| [表单VQA](./多模态表单识别.md) | 多模态通用表单结构化提取 | 通用卡证识别 | 通用结构化提取 |

|

||||

| 增值税发票 | 尽请期待 | 身份证识别 | 结构化提取、图像阴影 |

|

||||

| 印章检测与识别 | 端到端弯曲文本识别 | 合同比对 | 密集文本检测、NLP串联 |

|

||||

|

||||

## 交通

|

||||

|

||||

| 类别 | 亮点 | 类别 | 亮点 |

|

||||

| ------------------------------- | ------------------------------ | ---------- | -------- |

|

||||

| [车牌识别](./轻量级车牌识别.md) | 多角度图像、轻量模型、端侧部署 | 快递单识别 | 尽请期待 |

|

||||

| 驾驶证/行驶证识别 | 尽请期待 | | |

|

||||

<a href="https://trackgit.com">

|

||||

<img src="https://us-central1-trackgit-analytics.cloudfunctions.net/token/ping/l63cvzo0w09yxypc7ygl" alt="traffic" />

|

||||

</a>

|

||||

|

|

|

|||

|

|

@ -0,0 +1,251 @@

|

|||

# 基于PP-OCRv3的手写文字识别

|

||||

|

||||

- [1. 项目背景及意义](#1-项目背景及意义)

|

||||

- [2. 项目内容](#2-项目内容)

|

||||

- [3. PP-OCRv3识别算法介绍](#3-PP-OCRv3识别算法介绍)

|

||||

- [4. 安装环境](#4-安装环境)

|

||||

- [5. 数据准备](#5-数据准备)

|

||||

- [6. 模型训练](#6-模型训练)

|

||||

- [6.1 下载预训练模型](#61-下载预训练模型)

|

||||

- [6.2 修改配置文件](#62-修改配置文件)

|

||||

- [6.3 开始训练](#63-开始训练)

|

||||

- [7. 模型评估](#7-模型评估)

|

||||

- [8. 模型导出推理](#8-模型导出推理)

|

||||

- [8.1 模型导出](#81-模型导出)

|

||||

- [8.2 模型推理](#82-模型推理)

|

||||

|

||||

|

||||

## 1. 项目背景及意义

|

||||

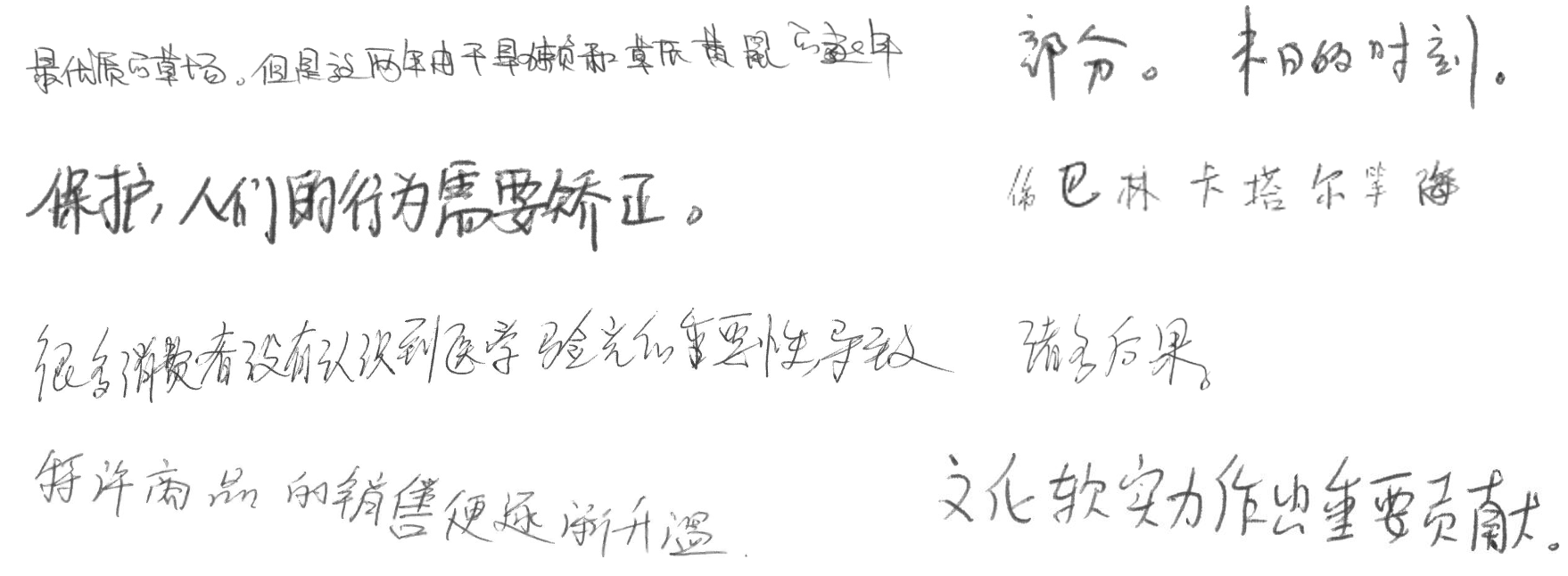

目前光学字符识别(OCR)技术在我们的生活当中被广泛使用,但是大多数模型在通用场景下的准确性还有待提高。针对于此我们借助飞桨提供的PaddleOCR套件较容易的实现了在垂类场景下的应用。手写体在日常生活中较为常见,然而手写体的识别却存在着很大的挑战,因为每个人的手写字体风格不一样,这对于视觉模型来说还是相当有挑战的。因此训练一个手写体识别模型具有很好的现实意义。下面给出一些手写体的示例图:

|

||||

|

||||

|

||||

|

||||

## 2. 项目内容

|

||||

本项目基于PaddleOCR套件,以PP-OCRv3识别模型为基础,针对手写文字识别场景进行优化。

|

||||

|

||||

Aistudio项目链接:[OCR手写文字识别](https://aistudio.baidu.com/aistudio/projectdetail/4330587)

|

||||

|

||||

## 3. PP-OCRv3识别算法介绍

|

||||

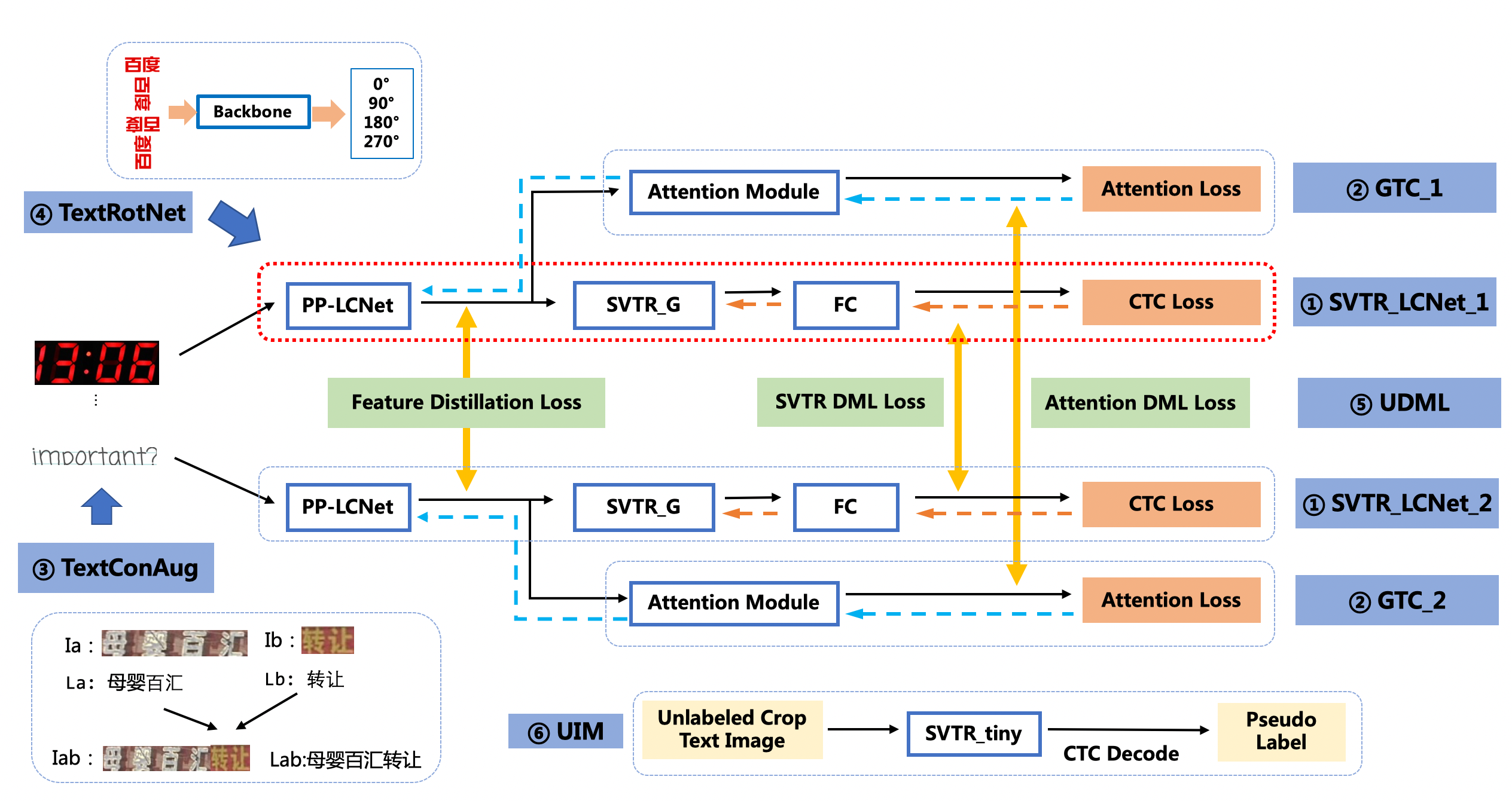

PP-OCRv3的识别模块是基于文本识别算法[SVTR](https://arxiv.org/abs/2205.00159)优化。SVTR不再采用RNN结构,通过引入Transformers结构更加有效地挖掘文本行图像的上下文信息,从而提升文本识别能力。如下图所示,PP-OCRv3采用了6个优化策略。

|

||||

|

||||

|

||||

|

||||

优化策略汇总如下:

|

||||

|

||||

* SVTR_LCNet:轻量级文本识别网络

|

||||

* GTC:Attention指导CTC训练策略

|

||||

* TextConAug:挖掘文字上下文信息的数据增广策略

|

||||

* TextRotNet:自监督的预训练模型

|

||||

* UDML:联合互学习策略

|

||||

* UIM:无标注数据挖掘方案

|

||||

|

||||

详细优化策略描述请参考[PP-OCRv3优化策略](https://github.com/PaddlePaddle/PaddleOCR/blob/release/2.5/doc/doc_ch/PP-OCRv3_introduction.md#3-%E8%AF%86%E5%88%AB%E4%BC%98%E5%8C%96)

|

||||

|

||||

## 4. 安装环境

|

||||

|

||||

|

||||

```python

|

||||

# 首先git官方的PaddleOCR项目,安装需要的依赖

|

||||

git clone https://github.com/PaddlePaddle/PaddleOCR.git

|

||||

cd PaddleOCR

|

||||

pip install -r requirements.txt

|

||||

```

|

||||

|

||||

## 5. 数据准备

|

||||

本项目使用公开的手写文本识别数据集,包含Chinese OCR, 中科院自动化研究所-手写中文数据集[CASIA-HWDB2.x](http://www.nlpr.ia.ac.cn/databases/handwriting/Download.html),以及由中科院手写数据和网上开源数据合并组合的[数据集](https://aistudio.baidu.com/aistudio/datasetdetail/102884/0)等,该项目已经挂载处理好的数据集,可直接下载使用进行训练。

|

||||

|

||||

|

||||

```python

|

||||

下载并解压数据

|

||||

tar -xf hw_data.tar

|

||||

```

|

||||

|

||||

## 6. 模型训练

|

||||

### 6.1 下载预训练模型

|

||||

首先需要下载我们需要的PP-OCRv3识别预训练模型,更多选择请自行选择其他的[文字识别模型](https://github.com/PaddlePaddle/PaddleOCR/blob/release/2.5/doc/doc_ch/models_list.md#2-%E6%96%87%E6%9C%AC%E8%AF%86%E5%88%AB%E6%A8%A1%E5%9E%8B)

|

||||

|

||||

|

||||

```python

|

||||

# 使用该指令下载需要的预训练模型

|

||||

wget -P ./pretrained_models/ https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_rec_train.tar

|

||||

# 解压预训练模型文件

|

||||

tar -xf ./pretrained_models/ch_PP-OCRv3_rec_train.tar -C pretrained_models

|

||||

```

|

||||

|

||||

### 6.2 修改配置文件

|

||||

我们使用`configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml`,主要修改训练轮数和学习率参相关参数,设置预训练模型路径,设置数据集路径。 另外,batch_size可根据自己机器显存大小进行调整。 具体修改如下几个地方:

|

||||

|

||||

```

|

||||

epoch_num: 100 # 训练epoch数

|

||||

save_model_dir: ./output/ch_PP-OCR_v3_rec

|

||||

save_epoch_step: 10

|

||||

eval_batch_step: [0, 100] # 评估间隔,每隔100step评估一次

|

||||

pretrained_model: ./pretrained_models/ch_PP-OCRv3_rec_train/best_accuracy # 预训练模型路径

|

||||

|

||||

|

||||

lr:

|

||||

name: Cosine # 修改学习率衰减策略为Cosine

|

||||

learning_rate: 0.0001 # 修改fine-tune的学习率

|

||||

warmup_epoch: 2 # 修改warmup轮数

|

||||

|

||||

Train:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data # 训练集图片路径

|

||||

ext_op_transform_idx: 1

|

||||

label_file_list:

|

||||

- ./train_data/chineseocr-data/rec_hand_line_all_label_train.txt # 训练集标签

|

||||

- ./train_data/handwrite/HWDB2.0Train_label.txt

|

||||

- ./train_data/handwrite/HWDB2.1Train_label.txt

|

||||

- ./train_data/handwrite/HWDB2.2Train_label.txt

|

||||

- ./train_data/handwrite/hwdb_ic13/handwriting_hwdb_train_labels.txt

|

||||

- ./train_data/handwrite/HW_Chinese/train_hw.txt

|

||||

ratio_list:

|

||||

- 0.1

|

||||

- 1.0

|

||||

- 1.0

|

||||

- 1.0

|

||||

- 0.02

|

||||

- 1.0

|

||||

loader:

|

||||

shuffle: true

|

||||

batch_size_per_card: 64

|

||||

drop_last: true

|

||||

num_workers: 4

|

||||

Eval:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data # 测试集图片路径

|

||||

label_file_list:

|

||||

- ./train_data/chineseocr-data/rec_hand_line_all_label_val.txt # 测试集标签

|

||||

- ./train_data/handwrite/HWDB2.0Test_label.txt

|

||||

- ./train_data/handwrite/HWDB2.1Test_label.txt

|

||||

- ./train_data/handwrite/HWDB2.2Test_label.txt

|

||||

- ./train_data/handwrite/hwdb_ic13/handwriting_hwdb_val_labels.txt

|

||||

- ./train_data/handwrite/HW_Chinese/test_hw.txt

|

||||

loader:

|

||||

shuffle: false

|

||||

drop_last: false

|

||||

batch_size_per_card: 64

|

||||

num_workers: 4

|

||||

```

|

||||

由于数据集大多是长文本,因此需要**注释**掉下面的数据增广策略,以便训练出更好的模型。

|

||||

```

|

||||

- RecConAug:

|

||||

prob: 0.5

|

||||

ext_data_num: 2

|

||||

image_shape: [48, 320, 3]

|

||||

```

|

||||

|

||||

|

||||

### 6.3 开始训练

|

||||

我们使用上面修改好的配置文件`configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml`,预训练模型,数据集路径,学习率,训练轮数等都已经设置完毕后,可以使用下面命令开始训练。

|

||||

|

||||

|

||||

```python

|

||||

# 开始训练识别模型

|

||||

python tools/train.py -c configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml

|

||||

|

||||

```

|

||||

|

||||

## 7. 模型评估

|

||||

在训练之前,我们可以直接使用下面命令来评估预训练模型的效果:

|

||||

|

||||

|

||||

|

||||

```python

|

||||

# 评估预训练模型

|

||||

python tools/eval.py -c configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml -o Global.pretrained_model="./pretrained_models/ch_PP-OCRv3_rec_train/best_accuracy"

|

||||

```

|

||||

```

|

||||

[2022/07/14 10:46:22] ppocr INFO: load pretrain successful from ./pretrained_models/ch_PP-OCRv3_rec_train/best_accuracy

|

||||

eval model:: 100%|████████████████████████████| 687/687 [03:29<00:00, 3.27it/s]

|

||||

[2022/07/14 10:49:52] ppocr INFO: metric eval ***************

|

||||

[2022/07/14 10:49:52] ppocr INFO: acc:0.03724954461811258

|

||||

[2022/07/14 10:49:52] ppocr INFO: norm_edit_dis:0.4859541065843199

|

||||

[2022/07/14 10:49:52] ppocr INFO: Teacher_acc:0.0371584699368947

|

||||

[2022/07/14 10:49:52] ppocr INFO: Teacher_norm_edit_dis:0.48718814890536477

|

||||

[2022/07/14 10:49:52] ppocr INFO: fps:947.8562684823883

|

||||

```

|

||||

|

||||

可以看出,直接加载预训练模型进行评估,效果较差,因为预训练模型并不是基于手写文字进行单独训练的,所以我们需要基于预训练模型进行finetune。

|

||||

训练完成后,可以进行测试评估,评估命令如下:

|

||||

|

||||

|

||||

|

||||

```python

|

||||

# 评估finetune效果

|

||||

python tools/eval.py -c configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml -o Global.pretrained_model="./output/ch_PP-OCR_v3_rec/best_accuracy"

|

||||

|

||||

```

|

||||

|

||||

评估结果如下,可以看出识别准确率为54.3%。

|

||||

```

|

||||

[2022/07/14 10:54:06] ppocr INFO: metric eval ***************

|

||||

[2022/07/14 10:54:06] ppocr INFO: acc:0.5430100180913

|

||||

[2022/07/14 10:54:06] ppocr INFO: norm_edit_dis:0.9203322593158589

|

||||

[2022/07/14 10:54:06] ppocr INFO: Teacher_acc:0.5401183969626324

|

||||

[2022/07/14 10:54:06] ppocr INFO: Teacher_norm_edit_dis:0.919827504507755

|

||||

[2022/07/14 10:54:06] ppocr INFO: fps:928.948733797251

|

||||

```

|

||||

|

||||

如需获取已训练模型,请扫码填写问卷,加入PaddleOCR官方交流群获取全部OCR垂类模型下载链接、《动手学OCR》电子书等全套OCR学习资料🎁

|

||||

<div align="left">

|

||||

<img src="https://ai-studio-static-online.cdn.bcebos.com/dd721099bd50478f9d5fb13d8dd00fad69c22d6848244fd3a1d3980d7fefc63e" width = "150" height = "150" />

|

||||

</div>

|

||||

将下载或训练完成的模型放置在对应目录下即可完成模型推理。

|

||||

|

||||

## 8. 模型导出推理

|

||||

训练完成后,可以将训练模型转换成inference模型。inference 模型会额外保存模型的结构信息,在预测部署、加速推理上性能优越,灵活方便,适合于实际系统集成。

|

||||

|

||||

|

||||

### 8.1 模型导出

|

||||

导出命令如下:

|

||||

|

||||

|

||||

|

||||

```python

|

||||

# 转化为推理模型

|

||||

python tools/export_model.py -c configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml -o Global.pretrained_model="./output/ch_PP-OCR_v3_rec/best_accuracy" Global.save_inference_dir="./inference/rec_ppocrv3/"

|

||||

|

||||

```

|

||||

|

||||

### 8.2 模型推理

|

||||

导出模型后,可以使用如下命令进行推理预测:

|

||||

|

||||

|

||||

|

||||

```python

|

||||

# 推理预测

|

||||

python tools/infer/predict_rec.py --image_dir="train_data/handwrite/HWDB2.0Test_images/104-P16_4.jpg" --rec_model_dir="./inference/rec_ppocrv3/Student"

|

||||

```

|

||||

|

||||

```

|

||||

[2022/07/14 10:55:56] ppocr INFO: In PP-OCRv3, rec_image_shape parameter defaults to '3, 48, 320', if you are using recognition model with PP-OCRv2 or an older version, please set --rec_image_shape='3,32,320

|

||||

[2022/07/14 10:55:58] ppocr INFO: Predicts of train_data/handwrite/HWDB2.0Test_images/104-P16_4.jpg:('品结构,差异化的多品牌渗透使欧莱雅确立了其在中国化妆', 0.9904912114143372)

|

||||

```

|

||||

|

||||

|

||||

```python

|

||||

# 可视化文字识别图片

|

||||

from PIL import Image

|

||||

import matplotlib.pyplot as plt

|

||||

import numpy as np

|

||||

import os

|

||||

|

||||

|

||||

img_path = 'train_data/handwrite/HWDB2.0Test_images/104-P16_4.jpg'

|

||||

|

||||

def vis(img_path):

|

||||

plt.figure()

|

||||

image = Image.open(img_path)

|

||||

plt.imshow(image)

|

||||

plt.show()

|

||||

# image = image.resize([208, 208])

|

||||

|

||||

|

||||

vis(img_path)

|

||||

```

|

||||

|

||||

|

||||

|

||||

|

|

@ -2,7 +2,7 @@

|

|||

|

||||

## 1. 简介

|

||||

|

||||

PP-OCRv3是百度开源的超轻量级场景文本检测识别模型库,其中超轻量的场景中文识别模型SVTR_LCNet使用了SVTR算法结构。为了保证速度,SVTR_LCNet将SVTR模型的Local Blocks替换为LCNet,使用两层Global Blocks。在中文场景中,PP-OCRv3识别主要使用如下优化策略:

|

||||

PP-OCRv3是百度开源的超轻量级场景文本检测识别模型库,其中超轻量的场景中文识别模型SVTR_LCNet使用了SVTR算法结构。为了保证速度,SVTR_LCNet将SVTR模型的Local Blocks替换为LCNet,使用两层Global Blocks。在中文场景中,PP-OCRv3识别主要使用如下优化策略([详细技术报告](../doc/doc_ch/PP-OCRv3_introduction.md)):

|

||||

- GTC:Attention指导CTC训练策略;

|

||||

- TextConAug:挖掘文字上下文信息的数据增广策略;

|

||||

- TextRotNet:自监督的预训练模型;

|

||||

|

|

|

|||

|

|

@ -6,11 +6,11 @@ Global:

|

|||

save_model_dir: ./output/re_layoutlmv2_xfund_zh

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 57 ]

|

||||

eval_batch_step: [ 0, 19 ]

|

||||

cal_metric_during_train: False

|

||||

save_inference_dir:

|

||||

use_visualdl: False

|

||||

seed: 2048

|

||||

seed: 2022

|

||||

infer_img: ppstructure/docs/vqa/input/zh_val_21.jpg

|

||||

save_res_path: ./output/re_layoutlmv2_xfund_zh/res/

|

||||

|

||||

|

|

@ -1,9 +1,9 @@

|

|||

Global:

|

||||

use_gpu: True

|

||||

epoch_num: &epoch_num 200

|

||||

epoch_num: &epoch_num 130

|

||||

log_smooth_window: 10

|

||||

print_batch_step: 10

|

||||

save_model_dir: ./output/re_layoutxlm/

|

||||

save_model_dir: ./output/re_layoutxlm_xfund_zh

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 19 ]

|

||||

|

|

@ -12,7 +12,7 @@ Global:

|

|||

use_visualdl: False

|

||||

seed: 2022

|

||||

infer_img: ppstructure/docs/vqa/input/zh_val_21.jpg

|

||||

save_res_path: ./output/re/

|

||||

save_res_path: ./output/re_layoutxlm_xfund_zh/res/

|

||||

|

||||

Architecture:

|

||||

model_type: vqa

|

||||

|

|

@ -81,7 +81,7 @@ Train:

|

|||

loader:

|

||||

shuffle: True

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

batch_size_per_card: 2

|

||||

num_workers: 8

|

||||

collate_fn: ListCollator

|

||||

|

||||

|

|

@ -6,13 +6,13 @@ Global:

|

|||

save_model_dir: ./output/ser_layoutlm_xfund_zh

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 57 ]

|

||||

eval_batch_step: [ 0, 19 ]

|

||||

cal_metric_during_train: False

|

||||

save_inference_dir:

|

||||

use_visualdl: False

|

||||

seed: 2022

|

||||

infer_img: ppstructure/docs/vqa/input/zh_val_42.jpg

|

||||

save_res_path: ./output/ser_layoutlm_xfund_zh/res/

|

||||

save_res_path: ./output/re_layoutlm_xfund_zh/res

|

||||

|

||||

Architecture:

|

||||

model_type: vqa

|

||||

|

|

@ -55,6 +55,7 @@ Train:

|

|||

data_dir: train_data/XFUND/zh_train/image

|

||||

label_file_list:

|

||||

- train_data/XFUND/zh_train/train.json

|

||||

ratio_list: [ 1.0 ]

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

|

|

@ -27,6 +27,7 @@ Architecture:

|

|||

Loss:

|

||||

name: VQASerTokenLayoutLMLoss

|

||||

num_classes: *num_classes

|

||||

key: "backbone_out"

|

||||

|

||||

Optimizer:

|

||||

name: AdamW

|

||||

|

|

@ -27,6 +27,7 @@ Architecture:

|

|||

Loss:

|

||||

name: VQASerTokenLayoutLMLoss

|

||||

num_classes: *num_classes

|

||||

key: "backbone_out"

|

||||

|

||||

Optimizer:

|

||||

name: AdamW

|

||||

|

|

@ -1,18 +1,18 @@

|

|||

Global:

|

||||

use_gpu: True

|

||||

epoch_num: &epoch_num 200

|

||||

epoch_num: &epoch_num 130

|

||||

log_smooth_window: 10

|

||||

print_batch_step: 10

|

||||

save_model_dir: ./output/re_layoutxlm_funsd

|

||||

save_model_dir: ./output/re_vi_layoutxlm_xfund_zh

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 57 ]

|

||||

eval_batch_step: [ 0, 19 ]

|

||||

cal_metric_during_train: False

|

||||

save_inference_dir:

|

||||

use_visualdl: False

|

||||

seed: 2022

|

||||

infer_img: train_data/FUNSD/testing_data/images/83624198.png

|

||||

save_res_path: ./output/re_layoutxlm_funsd/res/

|

||||

infer_img: ppstructure/docs/vqa/input/zh_val_21.jpg

|

||||

save_res_path: ./output/re/xfund_zh/with_gt

|

||||

|

||||

Architecture:

|

||||

model_type: vqa

|

||||

|

|

@ -21,6 +21,7 @@ Architecture:

|

|||

Backbone:

|

||||

name: LayoutXLMForRe

|

||||

pretrained: True

|

||||

mode: vi

|

||||

checkpoints:

|

||||

|

||||

Loss:

|

||||

|

|

@ -50,10 +51,9 @@ Metric:

|

|||

Train:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data/FUNSD/training_data/images/

|

||||

data_dir: train_data/XFUND/zh_train/image

|

||||

label_file_list:

|

||||

- ./train_data/FUNSD/train_v4.json

|

||||

# - ./train_data/FUNSD/train.json

|

||||

- train_data/XFUND/zh_train/train.json

|

||||

ratio_list: [ 1.0 ]

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

|

|

@ -62,8 +62,9 @@ Train:

|

|||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: True

|

||||

algorithm: *algorithm

|

||||

class_path: &class_path ./train_data/FUNSD/class_list.txt

|

||||

class_path: &class_path train_data/XFUND/class_list_xfun.txt

|

||||

use_textline_bbox_info: &use_textline_bbox_info True

|

||||

order_method: &order_method "tb-yx"

|

||||

- VQATokenPad:

|

||||

max_seq_len: &max_seq_len 512

|

||||

return_attention_mask: True

|

||||

|

|

@ -79,22 +80,20 @@ Train:

|

|||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'entities', 'relations']

|

||||

keep_keys: [ 'input_ids', 'bbox','attention_mask', 'token_type_ids', 'image', 'entities', 'relations'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: False

|

||||

shuffle: True

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 16

|

||||

batch_size_per_card: 2

|

||||

num_workers: 4

|

||||

collate_fn: ListCollator

|

||||

|

||||

Eval:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data/FUNSD/testing_data/images/

|

||||

label_file_list:

|

||||

- ./train_data/FUNSD/test_v4.json

|

||||

# - ./train_data/FUNSD/test.json

|

||||

data_dir: train_data/XFUND/zh_val/image

|

||||

label_file_list:

|

||||

- train_data/XFUND/zh_val/val.json

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

|

|

@ -104,6 +103,7 @@ Eval:

|

|||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

use_textline_bbox_info: *use_textline_bbox_info

|

||||

order_method: *order_method

|

||||

- VQATokenPad:

|

||||

max_seq_len: *max_seq_len

|

||||

return_attention_mask: True

|

||||

|

|

@ -119,11 +119,11 @@ Eval:

|

|||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'entities', 'relations']

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'entities', 'relations'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: False

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 8

|

||||

collate_fn: ListCollator

|

||||

|

||||

|

|

@ -0,0 +1,175 @@

|

|||

Global:

|

||||

use_gpu: True

|

||||

epoch_num: &epoch_num 130

|

||||

log_smooth_window: 10

|

||||

print_batch_step: 10

|

||||

save_model_dir: ./output/re_vi_layoutxlm_xfund_zh_udml

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 19 ]

|

||||

cal_metric_during_train: False

|

||||

save_inference_dir:

|

||||

use_visualdl: False

|

||||

seed: 2022

|

||||

infer_img: ppstructure/docs/vqa/input/zh_val_21.jpg

|

||||

save_res_path: ./output/re/xfund_zh/with_gt

|

||||

|

||||

Architecture:

|

||||

model_type: &model_type "vqa"

|

||||

name: DistillationModel

|

||||

algorithm: Distillation

|

||||

Models:

|

||||

Teacher:

|

||||

pretrained:

|

||||

freeze_params: false

|

||||

return_all_feats: true

|

||||

model_type: *model_type

|

||||

algorithm: &algorithm "LayoutXLM"

|

||||

Transform:

|

||||

Backbone:

|

||||

name: LayoutXLMForRe

|

||||

pretrained: True

|

||||

mode: vi

|

||||

checkpoints:

|

||||

Student:

|

||||

pretrained:

|

||||

freeze_params: false

|

||||

return_all_feats: true

|

||||

model_type: *model_type

|

||||

algorithm: *algorithm

|

||||

Transform:

|

||||

Backbone:

|

||||

name: LayoutXLMForRe

|

||||

pretrained: True

|

||||

mode: vi

|

||||

checkpoints:

|

||||

|

||||

Loss:

|

||||

name: CombinedLoss

|

||||

loss_config_list:

|

||||

- DistillationLossFromOutput:

|

||||

weight: 1.0

|

||||

model_name_list: ["Student", "Teacher"]

|

||||

key: loss

|

||||

reduction: mean

|

||||

- DistillationVQADistanceLoss:

|

||||

weight: 0.5

|

||||

mode: "l2"

|

||||

model_name_pairs:

|

||||

- ["Student", "Teacher"]

|

||||

key: hidden_states_5

|

||||

name: "loss_5"

|

||||

- DistillationVQADistanceLoss:

|

||||

weight: 0.5

|

||||

mode: "l2"

|

||||

model_name_pairs:

|

||||

- ["Student", "Teacher"]

|

||||

key: hidden_states_8

|

||||

name: "loss_8"

|

||||

|

||||

|

||||

Optimizer:

|

||||

name: AdamW

|

||||

beta1: 0.9

|

||||

beta2: 0.999

|

||||

clip_norm: 10

|

||||

lr:

|

||||

learning_rate: 0.00005

|

||||

warmup_epoch: 10

|

||||

regularizer:

|

||||

name: L2

|

||||

factor: 0.00000

|

||||

|

||||

PostProcess:

|

||||

name: DistillationRePostProcess

|

||||

model_name: ["Student", "Teacher"]

|

||||

key: null

|

||||

|

||||

|

||||

Metric:

|

||||

name: DistillationMetric

|

||||

base_metric_name: VQAReTokenMetric

|

||||

main_indicator: hmean

|

||||

key: "Student"

|

||||

|

||||

Train:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: train_data/XFUND/zh_train/image

|

||||

label_file_list:

|

||||

- train_data/XFUND/zh_train/train.json

|

||||

ratio_list: [ 1.0 ]

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: True

|

||||

algorithm: *algorithm

|

||||

class_path: &class_path train_data/XFUND/class_list_xfun.txt

|

||||

use_textline_bbox_info: &use_textline_bbox_info True

|

||||

# [None, "tb-yx"]

|

||||

order_method: &order_method "tb-yx"

|

||||

- VQATokenPad:

|

||||

max_seq_len: &max_seq_len 512

|

||||

return_attention_mask: True

|

||||

- VQAReTokenRelation:

|

||||

- VQAReTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1

|

||||

mean: [ 123.675, 116.28, 103.53 ]

|

||||

std: [ 58.395, 57.12, 57.375 ]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

keep_keys: [ 'input_ids', 'bbox','attention_mask', 'token_type_ids', 'image', 'entities', 'relations'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: True

|

||||

drop_last: False

|

||||

batch_size_per_card: 2

|

||||

num_workers: 4

|

||||

collate_fn: ListCollator

|

||||

|

||||

Eval:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: train_data/XFUND/zh_val/image

|

||||

label_file_list:

|

||||

- train_data/XFUND/zh_val/val.json

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: True

|

||||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

use_textline_bbox_info: *use_textline_bbox_info

|

||||

order_method: *order_method

|

||||

- VQATokenPad:

|

||||

max_seq_len: *max_seq_len

|

||||

return_attention_mask: True

|

||||

- VQAReTokenRelation:

|

||||

- VQAReTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1

|

||||

mean: [ 123.675, 116.28, 103.53 ]

|

||||

std: [ 58.395, 57.12, 57.375 ]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'entities', 'relations'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: False

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 8

|

||||

collate_fn: ListCollator

|

||||

|

||||

|

||||

|

|

@ -3,30 +3,38 @@ Global:

|

|||

epoch_num: &epoch_num 200

|

||||

log_smooth_window: 10

|

||||

print_batch_step: 10

|

||||

save_model_dir: ./output/ser_layoutlm_funsd

|

||||

save_model_dir: ./output/ser_vi_layoutxlm_xfund_zh

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 57 ]

|

||||

eval_batch_step: [ 0, 19 ]

|

||||

cal_metric_during_train: False

|

||||

save_inference_dir:

|

||||

use_visualdl: False

|

||||

seed: 2022

|

||||

infer_img: train_data/FUNSD/testing_data/images/83624198.png

|

||||

save_res_path: ./output/ser_layoutlm_funsd/res/

|

||||

infer_img: ppstructure/docs/vqa/input/zh_val_42.jpg

|

||||

# if you want to predict using the groundtruth ocr info,

|

||||

# you can use the following config

|

||||

# infer_img: train_data/XFUND/zh_val/val.json

|

||||

# infer_mode: False

|

||||

|

||||

save_res_path: ./output/ser/xfund_zh/res

|

||||

|

||||

Architecture:

|

||||

model_type: vqa

|

||||

algorithm: &algorithm "LayoutLM"

|

||||

algorithm: &algorithm "LayoutXLM"

|

||||

Transform:

|

||||

Backbone:

|

||||

name: LayoutLMForSer

|

||||

name: LayoutXLMForSer

|

||||

pretrained: True

|

||||

checkpoints:

|

||||

# one of base or vi

|

||||

mode: vi

|

||||

num_classes: &num_classes 7

|

||||

|

||||

Loss:

|

||||

name: VQASerTokenLayoutLMLoss

|

||||

num_classes: *num_classes

|

||||

key: "backbone_out"

|

||||

|

||||

Optimizer:

|

||||

name: AdamW

|

||||

|

|

@ -43,7 +51,7 @@ Optimizer:

|

|||

|

||||

PostProcess:

|

||||

name: VQASerTokenLayoutLMPostProcess

|

||||

class_path: &class_path ./train_data/FUNSD/class_list.txt

|

||||

class_path: &class_path train_data/XFUND/class_list_xfun.txt

|

||||

|

||||

Metric:

|

||||

name: VQASerTokenMetric

|

||||

|

|

@ -52,9 +60,10 @@ Metric:

|

|||

Train:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data/FUNSD/training_data/images/

|

||||

data_dir: train_data/XFUND/zh_train/image

|

||||

label_file_list:

|

||||

- ./train_data/FUNSD/train.json

|

||||

- train_data/XFUND/zh_train/train.json

|

||||

ratio_list: [ 1.0 ]

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

|

|

@ -64,6 +73,8 @@ Train:

|

|||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

use_textline_bbox_info: &use_textline_bbox_info True

|

||||

# one of [None, "tb-yx"]

|

||||

order_method: &order_method "tb-yx"

|

||||

- VQATokenPad:

|

||||

max_seq_len: &max_seq_len 512

|

||||

return_attention_mask: True

|

||||

|

|

@ -78,8 +89,7 @@ Train:

|

|||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels']

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: True

|

||||

drop_last: False

|

||||

|

|

@ -89,9 +99,9 @@ Train:

|

|||

Eval:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: train_data/FUNSD/testing_data/images/

|

||||

data_dir: train_data/XFUND/zh_val/image

|

||||

label_file_list:

|

||||

- ./train_data/FUNSD/test.json

|

||||

- train_data/XFUND/zh_val/val.json

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

|

|

@ -101,6 +111,7 @@ Eval:

|

|||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

use_textline_bbox_info: *use_textline_bbox_info

|

||||

order_method: *order_method

|

||||

- VQATokenPad:

|

||||

max_seq_len: *max_seq_len

|

||||

return_attention_mask: True

|

||||

|

|

@ -115,8 +126,7 @@ Eval:

|

|||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels']

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: False

|

||||

drop_last: False

|

||||

|

|

@ -0,0 +1,183 @@

|

|||

Global:

|

||||

use_gpu: True

|

||||

epoch_num: &epoch_num 200

|

||||

log_smooth_window: 10

|

||||

print_batch_step: 10

|

||||

save_model_dir: ./output/ser_vi_layoutxlm_xfund_zh_udml

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 19 ]

|

||||

cal_metric_during_train: False

|

||||

save_inference_dir:

|

||||

use_visualdl: False

|

||||

seed: 2022

|

||||

infer_img: ppstructure/docs/vqa/input/zh_val_42.jpg

|

||||

save_res_path: ./output/ser_layoutxlm_xfund_zh/res

|

||||

|

||||

|

||||

Architecture:

|

||||

model_type: &model_type "vqa"

|

||||

name: DistillationModel

|

||||

algorithm: Distillation

|

||||

Models:

|

||||

Teacher:

|

||||

pretrained:

|

||||

freeze_params: false

|

||||

return_all_feats: true

|

||||

model_type: *model_type

|

||||

algorithm: &algorithm "LayoutXLM"

|

||||

Transform:

|

||||

Backbone:

|

||||

name: LayoutXLMForSer

|

||||

pretrained: True

|

||||

# one of base or vi

|

||||

mode: vi

|

||||

checkpoints:

|

||||

num_classes: &num_classes 7

|

||||

Student:

|

||||

pretrained:

|

||||

freeze_params: false

|

||||

return_all_feats: true

|

||||

model_type: *model_type

|

||||

algorithm: *algorithm

|

||||

Transform:

|

||||

Backbone:

|

||||

name: LayoutXLMForSer

|

||||

pretrained: True

|

||||

# one of base or vi

|

||||

mode: vi

|

||||

checkpoints:

|

||||

num_classes: *num_classes

|

||||

|

||||

|

||||

Loss:

|

||||

name: CombinedLoss

|

||||

loss_config_list:

|

||||

- DistillationVQASerTokenLayoutLMLoss:

|

||||

weight: 1.0

|

||||

model_name_list: ["Student", "Teacher"]

|

||||

key: backbone_out

|

||||

num_classes: *num_classes

|

||||

- DistillationSERDMLLoss:

|

||||

weight: 1.0

|

||||

act: "softmax"

|

||||

use_log: true

|

||||

model_name_pairs:

|

||||

- ["Student", "Teacher"]

|

||||

key: backbone_out

|

||||

- DistillationVQADistanceLoss:

|

||||

weight: 0.5

|

||||

mode: "l2"

|

||||

model_name_pairs:

|

||||

- ["Student", "Teacher"]

|

||||

key: hidden_states_5

|

||||

name: "loss_5"

|

||||

- DistillationVQADistanceLoss:

|

||||

weight: 0.5

|

||||

mode: "l2"

|

||||

model_name_pairs:

|

||||

- ["Student", "Teacher"]

|

||||

key: hidden_states_8

|

||||

name: "loss_8"

|

||||

|

||||

|

||||

|

||||

Optimizer:

|

||||

name: AdamW

|

||||

beta1: 0.9

|

||||

beta2: 0.999

|

||||

lr:

|

||||

name: Linear

|

||||

learning_rate: 0.00005

|

||||

epochs: *epoch_num

|

||||

warmup_epoch: 10

|

||||

regularizer:

|

||||

name: L2

|

||||

factor: 0.00000

|

||||

|

||||

PostProcess:

|

||||

name: DistillationSerPostProcess

|

||||

model_name: ["Student", "Teacher"]

|

||||

key: backbone_out

|

||||

class_path: &class_path train_data/XFUND/class_list_xfun.txt

|

||||

|

||||

Metric:

|

||||

name: DistillationMetric

|

||||

base_metric_name: VQASerTokenMetric

|

||||

main_indicator: hmean

|

||||

key: "Student"

|

||||

|

||||

Train:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: train_data/XFUND/zh_train/image

|

||||

label_file_list:

|

||||

- train_data/XFUND/zh_train/train.json

|

||||

ratio_list: [ 1.0 ]

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: False

|

||||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

# one of [None, "tb-yx"]

|

||||

order_method: &order_method "tb-yx"

|

||||

- VQATokenPad:

|

||||

max_seq_len: &max_seq_len 512

|

||||

return_attention_mask: True

|

||||

- VQASerTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1

|

||||

mean: [ 123.675, 116.28, 103.53 ]

|

||||

std: [ 58.395, 57.12, 57.375 ]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: True

|

||||

drop_last: False

|

||||

batch_size_per_card: 4

|

||||

num_workers: 4

|

||||

|

||||

Eval:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: train_data/XFUND/zh_val/image

|

||||

label_file_list:

|

||||

- train_data/XFUND/zh_val/val.json

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: False

|

||||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

order_method: *order_method

|

||||

- VQATokenPad:

|

||||

max_seq_len: *max_seq_len

|

||||

return_attention_mask: True

|

||||

- VQASerTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1

|

||||

mean: [ 123.675, 116.28, 103.53 ]

|

||||

std: [ 58.395, 57.12, 57.375 ]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: False

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 4

|

||||

|

||||

|

|

@ -0,0 +1,106 @@

|

|||

Global:

|

||||

use_gpu: true

|

||||

epoch_num: 8

|

||||

log_smooth_window: 200

|

||||

print_batch_step: 200

|

||||

save_model_dir: ./output/rec/r45_visionlan

|

||||

save_epoch_step: 1

|

||||

# evaluation is run every 2000 iterations

|

||||

eval_batch_step: [0, 2000]

|

||||

cal_metric_during_train: True

|

||||

pretrained_model:

|

||||

checkpoints:

|

||||

save_inference_dir:

|

||||

use_visualdl: True

|

||||

infer_img: doc/imgs_words/en/word_2.png

|

||||

# for data or label process

|

||||

character_dict_path:

|

||||

max_text_length: &max_text_length 25

|

||||

training_step: &training_step LA

|

||||

infer_mode: False

|

||||

use_space_char: False

|

||||

save_res_path: ./output/rec/predicts_visionlan.txt

|

||||

|

||||

Optimizer:

|

||||

name: Adam

|

||||

beta1: 0.9

|

||||

beta2: 0.999

|

||||

clip_norm: 20.0

|

||||

group_lr: true

|

||||

training_step: *training_step

|

||||

lr:

|

||||

name: Piecewise

|

||||

decay_epochs: [6]

|

||||

values: [0.0001, 0.00001]

|

||||

regularizer:

|

||||

name: 'L2'

|

||||

factor: 0

|

||||

|

||||

Architecture:

|

||||

model_type: rec

|

||||

algorithm: VisionLAN

|

||||

Transform:

|

||||

Backbone:

|

||||

name: ResNet45

|

||||

strides: [2, 2, 2, 1, 1]

|

||||

Head:

|

||||

name: VLHead

|

||||

n_layers: 3

|

||||

n_position: 256

|

||||

n_dim: 512

|

||||

max_text_length: *max_text_length

|

||||

training_step: *training_step

|

||||

|

||||

Loss:

|

||||

name: VLLoss

|

||||

mode: *training_step

|

||||

weight_res: 0.5

|

||||

weight_mas: 0.5

|

||||

|

||||

PostProcess:

|

||||

name: VLLabelDecode

|

||||

|

||||

Metric:

|

||||

name: RecMetric

|

||||

is_filter: true

|

||||

|

||||

|

||||

Train:

|

||||

dataset:

|

||||

name: LMDBDataSet

|

||||

data_dir: ./train_data/data_lmdb_release/training/

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- ABINetRecAug:

|

||||

- VLLabelEncode: # Class handling label

|

||||

- VLRecResizeImg:

|

||||

image_shape: [3, 64, 256]

|

||||

- KeepKeys:

|

||||

keep_keys: ['image', 'label', 'label_res', 'label_sub', 'label_id', 'length'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: True

|

||||

batch_size_per_card: 220

|

||||

drop_last: True

|

||||

num_workers: 4

|

||||

|

||||

Eval:

|

||||

dataset:

|

||||

name: LMDBDataSet

|

||||

data_dir: ./train_data/data_lmdb_release/validation/

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VLLabelEncode: # Class handling label

|

||||

- VLRecResizeImg:

|

||||

image_shape: [3, 64, 256]

|

||||

- KeepKeys:

|

||||

keep_keys: ['image', 'label', 'label_res', 'label_sub', 'label_id', 'length'] # dataloader will return list in this order

|

||||

loader:

|

||||

shuffle: False

|

||||

drop_last: False

|

||||

batch_size_per_card: 64

|

||||

num_workers: 4

|

||||

|

||||

|

|

@ -1,125 +0,0 @@

|

|||

Global:

|

||||

use_gpu: True

|

||||

epoch_num: &epoch_num 200

|

||||

log_smooth_window: 10

|

||||

print_batch_step: 10

|

||||

save_model_dir: ./output/re_layoutlmv2_funsd

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 57 ]

|

||||

cal_metric_during_train: False

|

||||

save_inference_dir:

|

||||

use_visualdl: False

|

||||

seed: 2022

|

||||

infer_img: train_data/FUNSD/testing_data/images/83624198.png

|

||||

save_res_path: ./output/re_layoutlmv2_funsd/res/

|

||||

|

||||

Architecture:

|

||||

model_type: vqa

|

||||

algorithm: &algorithm "LayoutLMv2"

|

||||

Transform:

|

||||

Backbone:

|

||||

name: LayoutLMv2ForRe

|

||||

pretrained: True

|

||||

checkpoints:

|

||||

|

||||

Loss:

|

||||

name: LossFromOutput

|

||||

key: loss

|

||||

reduction: mean

|

||||

|

||||

Optimizer:

|

||||

name: AdamW

|

||||

beta1: 0.9

|

||||

beta2: 0.999

|

||||

clip_norm: 10

|

||||

lr:

|

||||

learning_rate: 0.00005

|

||||

warmup_epoch: 10

|

||||

regularizer:

|

||||

name: L2

|

||||

factor: 0.00000

|

||||

|

||||

PostProcess:

|

||||

name: VQAReTokenLayoutLMPostProcess

|

||||

|

||||

Metric:

|

||||

name: VQAReTokenMetric

|

||||

main_indicator: hmean

|

||||

|

||||

Train:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data/FUNSD/training_data/images/

|

||||

label_file_list:

|

||||

- ./train_data/FUNSD/train.json

|

||||

ratio_list: [ 1.0 ]

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: True

|

||||

algorithm: *algorithm

|

||||

class_path: &class_path train_data/FUNSD/class_list.txt

|

||||

- VQATokenPad:

|

||||

max_seq_len: &max_seq_len 512

|

||||

return_attention_mask: True

|

||||

- VQAReTokenRelation:

|

||||

- VQAReTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1./255.

|

||||

mean: [0.485, 0.456, 0.406]

|

||||

std: [0.229, 0.224, 0.225]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'entities', 'relations']

|

||||

loader:

|

||||

shuffle: True

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 8

|

||||

collate_fn: ListCollator

|

||||

|

||||

Eval:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data/FUNSD/testing_data/images/

|

||||

label_file_list:

|

||||

- ./train_data/FUNSD/test.json

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: True

|

||||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

- VQATokenPad:

|

||||

max_seq_len: *max_seq_len

|

||||

return_attention_mask: True

|

||||

- VQAReTokenRelation:

|

||||

- VQAReTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1./255.

|

||||

mean: [0.485, 0.456, 0.406]

|

||||

std: [0.229, 0.224, 0.225]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'entities', 'relations']

|

||||

loader:

|

||||

shuffle: False

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 8

|

||||

collate_fn: ListCollator

|

||||

|

|

@ -1,124 +0,0 @@

|

|||

Global:

|

||||

use_gpu: True

|

||||

epoch_num: &epoch_num 200

|

||||

log_smooth_window: 10

|

||||

print_batch_step: 10

|

||||

save_model_dir: ./output/ser_layoutlm_sroie

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 200 ]

|

||||

cal_metric_during_train: False

|

||||

save_inference_dir:

|

||||

use_visualdl: False

|

||||

seed: 2022

|

||||

infer_img: train_data/SROIE/test/X00016469670.jpg

|

||||

save_res_path: ./output/ser_layoutlm_sroie/res/

|

||||

|

||||

Architecture:

|

||||

model_type: vqa

|

||||

algorithm: &algorithm "LayoutLM"

|

||||

Transform:

|

||||

Backbone:

|

||||

name: LayoutLMForSer

|

||||

pretrained: True

|

||||

checkpoints:

|

||||

num_classes: &num_classes 9

|

||||

|

||||

Loss:

|

||||

name: VQASerTokenLayoutLMLoss

|

||||

num_classes: *num_classes

|

||||

|

||||

Optimizer:

|

||||

name: AdamW

|

||||

beta1: 0.9

|

||||

beta2: 0.999

|

||||

lr:

|

||||

name: Linear

|

||||

learning_rate: 0.00005

|

||||

epochs: *epoch_num

|

||||

warmup_epoch: 2

|

||||

regularizer:

|

||||

name: L2

|

||||

factor: 0.00000

|

||||

|

||||

PostProcess:

|

||||

name: VQASerTokenLayoutLMPostProcess

|

||||

class_path: &class_path ./train_data/SROIE/class_list.txt

|

||||

|

||||

Metric:

|

||||

name: VQASerTokenMetric

|

||||

main_indicator: hmean

|

||||

|

||||

Train:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data/SROIE/train

|

||||

label_file_list:

|

||||

- ./train_data/SROIE/train.txt

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: False

|

||||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

use_textline_bbox_info: &use_textline_bbox_info True

|

||||

- VQATokenPad:

|

||||

max_seq_len: &max_seq_len 512

|

||||

return_attention_mask: True

|

||||

- VQASerTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1

|

||||

mean: [ 123.675, 116.28, 103.53 ]

|

||||

std: [ 58.395, 57.12, 57.375 ]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels']

|

||||

loader:

|

||||

shuffle: True

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 4

|

||||

|

||||

Eval:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data/SROIE/test

|

||||

label_file_list:

|

||||

- ./train_data/SROIE/test.txt

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: False

|

||||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

use_textline_bbox_info: *use_textline_bbox_info

|

||||

- VQATokenPad:

|

||||

max_seq_len: *max_seq_len

|

||||

return_attention_mask: True

|

||||

- VQASerTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1

|

||||

mean: [ 123.675, 116.28, 103.53 ]

|

||||

std: [ 58.395, 57.12, 57.375 ]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels']

|

||||

loader:

|

||||

shuffle: False

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 4

|

||||

|

|

@ -1,123 +0,0 @@

|

|||

Global:

|

||||

use_gpu: True

|

||||

epoch_num: &epoch_num 200

|

||||

log_smooth_window: 10

|

||||

print_batch_step: 10

|

||||

save_model_dir: ./output/ser_layoutlmv2_funsd

|

||||

save_epoch_step: 2000

|

||||

# evaluation is run every 10 iterations after the 0th iteration

|

||||

eval_batch_step: [ 0, 100 ]

|

||||

cal_metric_during_train: False

|

||||

save_inference_dir:

|

||||

use_visualdl: False

|

||||

seed: 2022

|

||||

infer_img: train_data/FUNSD/testing_data/images/83624198.png

|

||||

save_res_path: ./output/ser_layoutlmv2_funsd/res/

|

||||

|

||||

Architecture:

|

||||

model_type: vqa

|

||||

algorithm: &algorithm "LayoutLMv2"

|

||||

Transform:

|

||||

Backbone:

|

||||

name: LayoutLMv2ForSer

|

||||

pretrained: True

|

||||

checkpoints:

|

||||

num_classes: &num_classes 7

|

||||

|

||||

Loss:

|

||||

name: VQASerTokenLayoutLMLoss

|

||||

num_classes: *num_classes

|

||||

|

||||

Optimizer:

|

||||

name: AdamW

|

||||

beta1: 0.9

|

||||

beta2: 0.999

|

||||

lr:

|

||||

name: Linear

|

||||

learning_rate: 0.00005

|

||||

epochs: *epoch_num

|

||||

warmup_epoch: 2

|

||||

regularizer:

|

||||

|

||||

name: L2

|

||||

factor: 0.00000

|

||||

|

||||

PostProcess:

|

||||

name: VQASerTokenLayoutLMPostProcess

|

||||

class_path: &class_path train_data/FUNSD/class_list.txt

|

||||

|

||||

Metric:

|

||||

name: VQASerTokenMetric

|

||||

main_indicator: hmean

|

||||

|

||||

Train:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data/FUNSD/training_data/images/

|

||||

label_file_list:

|

||||

- ./train_data/FUNSD/train.json

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: False

|

||||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

- VQATokenPad:

|

||||

max_seq_len: &max_seq_len 512

|

||||

return_attention_mask: True

|

||||

- VQASerTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1

|

||||

mean: [ 123.675, 116.28, 103.53 ]

|

||||

std: [ 58.395, 57.12, 57.375 ]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels']

|

||||

loader:

|

||||

shuffle: True

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 4

|

||||

|

||||

Eval:

|

||||

dataset:

|

||||

name: SimpleDataSet

|

||||

data_dir: ./train_data/FUNSD/testing_data/images/

|

||||

label_file_list:

|

||||

- ./train_data/FUNSD/test.json

|

||||

transforms:

|

||||

- DecodeImage: # load image

|

||||

img_mode: RGB

|

||||

channel_first: False

|

||||

- VQATokenLabelEncode: # Class handling label

|

||||

contains_re: False

|

||||

algorithm: *algorithm

|

||||

class_path: *class_path

|

||||

- VQATokenPad:

|

||||

max_seq_len: *max_seq_len

|

||||

return_attention_mask: True

|

||||

- VQASerTokenChunk:

|

||||

max_seq_len: *max_seq_len

|

||||

- Resize:

|

||||

size: [224,224]

|

||||

- NormalizeImage:

|

||||

scale: 1

|

||||

mean: [ 123.675, 116.28, 103.53 ]

|

||||

std: [ 58.395, 57.12, 57.375 ]

|

||||

order: 'hwc'

|

||||

- ToCHWImage:

|

||||

- KeepKeys:

|

||||

# dataloader will return list in this order

|

||||

keep_keys: [ 'input_ids', 'bbox', 'attention_mask', 'token_type_ids', 'image', 'labels']

|

||||

loader:

|

||||

shuffle: False

|

||||

drop_last: False

|

||||

batch_size_per_card: 8

|

||||

num_workers: 4

|

||||

|

|

@ -1,123 +0,0 @@

|

|||

Global:

|

||||

use_gpu: True

|

||||