514 lines

48 KiB

Markdown

514 lines

48 KiB

Markdown

<div align="center">

|

||

<p>

|

||

<a href="https://www.ultralytics.com/blog/all-you-need-to-know-about-ultralytics-yolo11-and-its-applications" target="_blank">

|

||

<img width="100%" src="https://raw.githubusercontent.com/ultralytics/assets/main/yolov8/banner-yolov8.png" alt="Ultralytics YOLO 横幅"></a>

|

||

</p>

|

||

|

||

[中文](https://docs.ultralytics.com/zh) | [한국어](https://docs.ultralytics.com/ko) | [日本語](https://docs.ultralytics.com/ja) | [Русский](https://docs.ultralytics.com/ru) | [Deutsch](https://docs.ultralytics.com/de) | [Français](https://docs.ultralytics.com/fr) | [Español](https://docs.ultralytics.com/es) | [Português](https://docs.ultralytics.com/pt) | [Türkçe](https://docs.ultralytics.com/tr) | [Tiếng Việt](https://docs.ultralytics.com/vi) | [العربية](https://docs.ultralytics.com/ar)

|

||

|

||

<div>

|

||

<a href="https://github.com/ultralytics/yolov5/actions/workflows/ci-testing.yml"><img src="https://github.com/ultralytics/yolov5/actions/workflows/ci-testing.yml/badge.svg" alt="YOLOv5 CI 测试"></a>

|

||

<a href="https://zenodo.org/badge/latestdoi/264818686"><img src="https://zenodo.org/badge/264818686.svg" alt="YOLOv5 引用"></a>

|

||

<a href="https://hub.docker.com/r/ultralytics/yolov5"><img src="https://img.shields.io/docker/pulls/ultralytics/yolov5?logo=docker" alt="Docker 拉取次数"></a>

|

||

<a href="https://discord.com/invite/ultralytics"><img alt="Discord" src="https://img.shields.io/discord/1089800235347353640?logo=discord&logoColor=white&label=Discord&color=blue"></a> <a href="https://community.ultralytics.com/"><img alt="Ultralytics 论坛" src="https://img.shields.io/discourse/users?server=https%3A%2F%2Fcommunity.ultralytics.com&logo=discourse&label=Forums&color=blue"></a> <a href="https://reddit.com/r/ultralytics"><img alt="Ultralytics Reddit" src="https://img.shields.io/reddit/subreddit-subscribers/ultralytics?style=flat&logo=reddit&logoColor=white&label=Reddit&color=blue"></a>

|

||

<br>

|

||

<a href="https://bit.ly/yolov5-paperspace-notebook"><img src="https://assets.paperspace.io/img/gradient-badge.svg" alt="在 Gradient 上运行"></a>

|

||

<a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="在 Colab 中打开"></a>

|

||

<a href="https://www.kaggle.com/models/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="在 Kaggle 中打开"></a>

|

||

</div>

|

||

<br>

|

||

|

||

Ultralytics YOLOv5 🚀 是由 [Ultralytics](https://www.ultralytics.com/) 开发的尖端、达到业界顶尖水平(SOTA)的计算机视觉模型。基于 [PyTorch](https://pytorch.org/) 框架,YOLOv5 以其易用性、速度和准确性而闻名。它融合了广泛研究和开发的见解与最佳实践,使其成为各种视觉 AI 任务的热门选择,包括[目标检测](https://docs.ultralytics.com/tasks/detect/)、[图像分割](https://docs.ultralytics.com/tasks/segment/)和[图像分类](https://docs.ultralytics.com/tasks/classify/)。

|

||

|

||

我们希望这里的资源能帮助您充分利用 YOLOv5。请浏览 [YOLOv5 文档](https://docs.ultralytics.com/yolov5/)获取详细信息,在 [GitHub](https://github.com/ultralytics/yolov5/issues/new/choose) 上提出 issue 以获得支持,并加入我们的 [Discord 社区](https://discord.com/invite/ultralytics)进行提问和讨论!

|

||

|

||

如需申请企业许可证,请填写 [Ultralytics 授权许可](https://www.ultralytics.com/license) 表格。

|

||

|

||

<div align="center">

|

||

<a href="https://github.com/ultralytics"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-github.png" width="2%" alt="Ultralytics GitHub"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="space">

|

||

<a href="https://www.linkedin.com/company/ultralytics/"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-linkedin.png" width="2%" alt="Ultralytics LinkedIn"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="space">

|

||

<a href="https://twitter.com/ultralytics"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-twitter.png" width="2%" alt="Ultralytics Twitter"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="space">

|

||

<a href="https://youtube.com/ultralytics?sub_confirmation=1"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-youtube.png" width="2%" alt="Ultralytics YouTube"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="space">

|

||

<a href="https://www.tiktok.com/@ultralytics"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-tiktok.png" width="2%" alt="Ultralytics TikTok"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="space">

|

||

<a href="https://ultralytics.com/bilibili"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-bilibili.png" width="2%" alt="Ultralytics BiliBili"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="2%" alt="space">

|

||

<a href="https://discord.com/invite/ultralytics"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-discord.png" width="2%" alt="Ultralytics Discord"></a>

|

||

</div>

|

||

|

||

</div>

|

||

<br>

|

||

|

||

## 🚀 YOLO11:下一代进化

|

||

|

||

我们激动地宣布推出 **Ultralytics YOLO11** 🚀,这是我们业界顶尖(SOTA)视觉模型的最新进展!YOLO11 现已在 [Ultralytics YOLO GitHub 仓库](https://github.com/ultralytics/ultralytics)发布,它继承了我们速度快、精度高和易于使用的传统。无论您是处理[目标检测](https://docs.ultralytics.com/tasks/detect/)、[实例分割](https://docs.ultralytics.com/tasks/segment/)、[姿态估计](https://docs.ultralytics.com/tasks/pose/)、[图像分类](https://docs.ultralytics.com/tasks/classify/)还是[旋转目标检测 (OBB)](https://docs.ultralytics.com/tasks/obb/),YOLO11 都能提供在多样化应用中脱颖而出所需的性能和多功能性。

|

||

|

||

立即开始,释放 YOLO11 的全部潜力!访问 [Ultralytics 文档](https://docs.ultralytics.com/)获取全面的指南和资源:

|

||

|

||

[](https://badge.fury.io/py/ultralytics) [](https://www.pepy.tech/projects/ultralytics)

|

||

|

||

```bash

|

||

# 安装 ultralytics 包

|

||

pip install ultralytics

|

||

```

|

||

|

||

<div align="center">

|

||

<a href="https://www.ultralytics.com/yolo" target="_blank">

|

||

<img width="100%" src="https://raw.githubusercontent.com/ultralytics/assets/refs/heads/main/yolo/performance-comparison.png" alt="Ultralytics YOLO 性能比较"></a>

|

||

</div>

|

||

|

||

## 📚 文档

|

||

|

||

请参阅 [YOLOv5 文档](https://docs.ultralytics.com/yolov5/),了解有关训练、测试和部署的完整文档。请参阅下方的快速入门示例。

|

||

|

||

<details open>

|

||

<summary>安装</summary>

|

||

|

||

克隆仓库并在 [**Python>=3.8.0**](https://www.python.org/) 环境中安装依赖项。确保您已安装 [**PyTorch>=1.8**](https://pytorch.org/get-started/locally/)。

|

||

|

||

```bash

|

||

# 克隆 YOLOv5 仓库

|

||

git clone https://github.com/ultralytics/yolov5

|

||

|

||

# 导航到克隆的目录

|

||

cd yolov5

|

||

|

||

# 安装所需的包

|

||

pip install -r requirements.txt

|

||

```

|

||

|

||

</details>

|

||

|

||

<details open>

|

||

<summary>使用 PyTorch Hub 进行推理</summary>

|

||

|

||

通过 [PyTorch Hub](https://docs.ultralytics.com/yolov5/tutorials/pytorch_hub_model_loading/) 使用 YOLOv5 进行推理。[模型](https://github.com/ultralytics/yolov5/tree/master/models) 会自动从最新的 YOLOv5 [发布版本](https://github.com/ultralytics/yolov5/releases)下载。

|

||

|

||

```python

|

||

import torch

|

||

|

||

# 加载 YOLOv5 模型(选项:yolov5n, yolov5s, yolov5m, yolov5l, yolov5x)

|

||

model = torch.hub.load("ultralytics/yolov5", "yolov5s") # 默认:yolov5s

|

||

|

||

# 定义输入图像源(URL、本地文件、PIL 图像、OpenCV 帧、numpy 数组或列表)

|

||

img = "https://ultralytics.com/images/zidane.jpg" # 示例图像

|

||

|

||

# 执行推理(自动处理批处理、调整大小、归一化)

|

||

results = model(img)

|

||

|

||

# 处理结果(选项:.print(), .show(), .save(), .crop(), .pandas())

|

||

results.print() # 将结果打印到控制台

|

||

results.show() # 在窗口中显示结果

|

||

results.save() # 将结果保存到 runs/detect/exp

|

||

```

|

||

|

||

</details>

|

||

|

||

<details>

|

||

<summary>使用 detect.py 进行推理</summary>

|

||

|

||

`detect.py` 脚本在各种来源上运行推理。它会自动从最新的 YOLOv5 [发布版本](https://github.com/ultralytics/yolov5/releases)下载[模型](https://github.com/ultralytics/yolov5/tree/master/models),并将结果保存到 `runs/detect` 目录。

|

||

|

||

```bash

|

||

# 使用网络摄像头运行推理

|

||

python detect.py --weights yolov5s.pt --source 0

|

||

|

||

# 对本地图像文件运行推理

|

||

python detect.py --weights yolov5s.pt --source img.jpg

|

||

|

||

# 对本地视频文件运行推理

|

||

python detect.py --weights yolov5s.pt --source vid.mp4

|

||

|

||

# 对屏幕截图运行推理

|

||

python detect.py --weights yolov5s.pt --source screen

|

||

|

||

# 对图像目录运行推理

|

||

python detect.py --weights yolov5s.pt --source path/to/images/

|

||

|

||

# 对列出图像路径的文本文件运行推理

|

||

python detect.py --weights yolov5s.pt --source list.txt

|

||

|

||

# 对列出流 URL 的文本文件运行推理

|

||

python detect.py --weights yolov5s.pt --source list.streams

|

||

|

||

# 使用 glob 模式对图像运行推理

|

||

python detect.py --weights yolov5s.pt --source 'path/to/*.jpg'

|

||

|

||

# 对 YouTube 视频 URL 运行推理

|

||

python detect.py --weights yolov5s.pt --source 'https://youtu.be/LNwODJXcvt4'

|

||

|

||

# 对 RTSP、RTMP 或 HTTP 流运行推理

|

||

python detect.py --weights yolov5s.pt --source 'rtsp://example.com/media.mp4'

|

||

```

|

||

|

||

</details>

|

||

|

||

<details>

|

||

<summary>训练</summary>

|

||

|

||

以下命令演示了如何复现 YOLOv5 在 [COCO 数据集](https://docs.ultralytics.com/datasets/detect/coco/)上的结果。[模型](https://github.com/ultralytics/yolov5/tree/master/models)和[数据集](https://github.com/ultralytics/yolov5/tree/master/data)都会自动从最新的 YOLOv5 [发布版本](https://github.com/ultralytics/yolov5/releases)下载。YOLOv5n/s/m/l/x 的训练时间在单个 [NVIDIA V100 GPU](https://www.nvidia.com/en-us/data-center/v100/) 上大约需要 1/2/4/6/8 天。使用[多 GPU 训练](https://docs.ultralytics.com/yolov5/tutorials/multi_gpu_training/)可以显著减少训练时间。请使用硬件允许的最大 `--batch-size`,或使用 `--batch-size -1` 以启用 YOLOv5 [AutoBatch](https://github.com/ultralytics/yolov5/pull/5092)。下面显示的批处理大小适用于 V100-16GB GPU。

|

||

|

||

```bash

|

||

# 在 COCO 上训练 YOLOv5n 300 个周期

|

||

python train.py --data coco.yaml --epochs 300 --weights '' --cfg yolov5n.yaml --batch-size 128

|

||

|

||

# 在 COCO 上训练 YOLOv5s 300 个周期

|

||

python train.py --data coco.yaml --epochs 300 --weights '' --cfg yolov5s.yaml --batch-size 64

|

||

|

||

# 在 COCO 上训练 YOLOv5m 300 个周期

|

||

python train.py --data coco.yaml --epochs 300 --weights '' --cfg yolov5m.yaml --batch-size 40

|

||

|

||

# 在 COCO 上训练 YOLOv5l 300 个周期

|

||

python train.py --data coco.yaml --epochs 300 --weights '' --cfg yolov5l.yaml --batch-size 24

|

||

|

||

# 在 COCO 上训练 YOLOv5x 300 个周期

|

||

python train.py --data coco.yaml --epochs 300 --weights '' --cfg yolov5x.yaml --batch-size 16

|

||

```

|

||

|

||

<img width="800" src="https://user-images.githubusercontent.com/26833433/90222759-949d8800-ddc1-11ea-9fa1-1c97eed2b963.png" alt="YOLOv5 训练结果">

|

||

|

||

</details>

|

||

|

||

<details open>

|

||

<summary>教程</summary>

|

||

|

||

- **[训练自定义数据](https://docs.ultralytics.com/yolov5/tutorials/train_custom_data/)** 🚀 **推荐**:学习如何在您自己的数据集上训练 YOLOv5。

|

||

- **[获得最佳训练结果的技巧](https://docs.ultralytics.com/guides/model-training-tips/)** ☘️:利用专家技巧提升模型性能。

|

||

- **[多 GPU 训练](https://docs.ultralytics.com/yolov5/tutorials/multi_gpu_training/)**:使用多个 GPU 加速训练。

|

||

- **[PyTorch Hub 集成](https://docs.ultralytics.com/yolov5/tutorials/pytorch_hub_model_loading/)** 🌟 **新增**:使用 PyTorch Hub 轻松加载模型。

|

||

- **[模型导出 (TFLite, ONNX, CoreML, TensorRT)](https://docs.ultralytics.com/yolov5/tutorials/model_export/)** 🚀:将您的模型转换为各种部署格式,如 [ONNX](https://onnx.ai/) 或 [TensorRT](https://developer.nvidia.com/tensorrt)。

|

||

- **[NVIDIA Jetson 部署](https://docs.ultralytics.com/guides/nvidia-jetson/)** 🌟 **新增**:在 [NVIDIA Jetson](https://developer.nvidia.com/embedded-computing) 设备上部署 YOLOv5。

|

||

- **[测试时增强 (TTA)](https://docs.ultralytics.com/yolov5/tutorials/test_time_augmentation/)**:使用 TTA 提高预测准确性。

|

||

- **[模型集成](https://docs.ultralytics.com/yolov5/tutorials/model_ensembling/)**:组合多个模型以获得更好的性能。

|

||

- **[模型剪枝/稀疏化](https://docs.ultralytics.com/yolov5/tutorials/model_pruning_and_sparsity/)**:优化模型的大小和速度。

|

||

- **[超参数进化](https://docs.ultralytics.com/yolov5/tutorials/hyperparameter_evolution/)**:自动找到最佳训练超参数。

|

||

- **[使用冻结层的迁移学习](https://docs.ultralytics.com/yolov5/tutorials/transfer_learning_with_frozen_layers/)**:使用[迁移学习](https://www.ultralytics.com/glossary/transfer-learning)高效地将预训练模型应用于新任务。

|

||

- **[架构摘要](https://docs.ultralytics.com/yolov5/tutorials/architecture_description/)** 🌟 **新增**:了解 YOLOv5 模型架构。

|

||

- **[Ultralytics HUB 训练](https://www.ultralytics.com/hub)** 🚀 **推荐**:使用 Ultralytics HUB 训练和部署 YOLO 模型。

|

||

- **[ClearML 日志记录](https://docs.ultralytics.com/yolov5/tutorials/clearml_logging_integration/)**:与 [ClearML](https://clear.ml/) 集成以进行实验跟踪。

|

||

- **[Neural Magic DeepSparse 集成](https://docs.ultralytics.com/yolov5/tutorials/neural_magic_pruning_quantization/)**:使用 DeepSparse 加速推理。

|

||

- **[Comet 日志记录](https://docs.ultralytics.com/yolov5/tutorials/comet_logging_integration/)** 🌟 **新增**:使用 [Comet ML](https://www.comet.com/site/) 记录实验。

|

||

|

||

</details>

|

||

|

||

## 🧩 集成

|

||

|

||

我们与领先 AI 平台的关键集成扩展了 Ultralytics 产品的功能,增强了诸如数据集标注、训练、可视化和模型管理等任务。了解 Ultralytics 如何与 [Weights & Biases](https://docs.ultralytics.com/integrations/weights-biases/)、[Comet ML](https://docs.ultralytics.com/integrations/comet/)、[Roboflow](https://docs.ultralytics.com/integrations/roboflow/) 和 [Intel OpenVINO](https://docs.ultralytics.com/integrations/openvino/) 等合作伙伴协作,优化您的 AI 工作流程。在 [Ultralytics 集成](https://docs.ultralytics.com/integrations/) 探索更多信息。

|

||

|

||

<a href="https://docs.ultralytics.com/integrations/" target="_blank">

|

||

<img width="100%" src="https://github.com/ultralytics/assets/raw/main/yolov8/banner-integrations.png" alt="Ultralytics 主动学习集成">

|

||

</a>

|

||

<br>

|

||

<br>

|

||

|

||

<div align="center">

|

||

<a href="https://www.ultralytics.com/hub">

|

||

<img src="https://github.com/ultralytics/assets/raw/main/partners/logo-ultralytics-hub.png" width="10%" alt="Ultralytics HUB logo"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="15%" height="0" alt="space">

|

||

<a href="https://docs.ultralytics.com/integrations/weights-biases/">

|

||

<img src="https://github.com/ultralytics/assets/raw/main/partners/logo-wb.png" width="10%" alt="Weights & Biases logo"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="15%" height="0" alt="space">

|

||

<a href="https://docs.ultralytics.com/integrations/comet/">

|

||

<img src="https://github.com/ultralytics/assets/raw/main/partners/logo-comet.png" width="10%" alt="Comet ML logo"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="15%" height="0" alt="space">

|

||

<a href="https://docs.ultralytics.com/integrations/neural-magic/">

|

||

<img src="https://github.com/ultralytics/assets/raw/main/partners/logo-neuralmagic.png" width="10%" alt="Neural Magic logo"></a>

|

||

</div>

|

||

|

||

| Ultralytics HUB 🌟 | Weights & Biases | Comet | Neural Magic |

|

||

| :-------------------------------------------------------------------------------------------------------: | :---------------------------------------------------------------------------------------------------------: | :------------------------------------------------------------------------------------------------------------------------: | :---------------------------------------------------------------------------------------------------------------------: |

|

||

| 简化 YOLO 工作流程:使用 [Ultralytics HUB](https://hub.ultralytics.com/) 轻松标注、训练和部署。立即试用! | 使用 [Weights & Biases](https://docs.ultralytics.com/integrations/weights-biases/) 跟踪实验、超参数和结果。 | 永久免费的 [Comet ML](https://docs.ultralytics.com/integrations/comet/) 让您保存 YOLO 模型、恢复训练并交互式地可视化预测。 | 使用 [Neural Magic DeepSparse](https://docs.ultralytics.com/integrations/neural-magic/) 将 YOLO 推理速度提高多达 6 倍。 |

|

||

|

||

## ⭐ Ultralytics HUB

|

||

|

||

通过 [Ultralytics HUB](https://www.ultralytics.com/hub) ⭐ 体验无缝的 AI 开发,这是构建、训练和部署[计算机视觉](https://www.ultralytics.com/glossary/computer-vision-cv)模型的终极平台。可视化数据集,训练 [YOLOv5](https://docs.ultralytics.com/models/yolov5/) 和 [YOLOv8](https://docs.ultralytics.com/models/yolov8/) 🚀 模型,并将它们部署到实际应用中,无需编写任何代码。使用我们尖端的工具和用户友好的 [Ultralytics App](https://www.ultralytics.com/app-install) 将图像转化为可操作的见解。今天就**免费**开始您的旅程吧!

|

||

|

||

<a align="center" href="https://www.ultralytics.com/hub" target="_blank">

|

||

<img width="100%" src="https://github.com/ultralytics/assets/raw/main/im/ultralytics-hub.png" alt="Ultralytics HUB 平台截图"></a>

|

||

|

||

## 🤔 为何选择 YOLOv5?

|

||

|

||

YOLOv5 的设计旨在简单易用。我们优先考虑实际性能和可访问性。

|

||

|

||

<p align="left"><img width="800" src="https://user-images.githubusercontent.com/26833433/155040763-93c22a27-347c-4e3c-847a-8094621d3f4e.png" alt="YOLOv5 性能图表"></p>

|

||

<details>

|

||

<summary>YOLOv5-P5 640 图表</summary>

|

||

|

||

<p align="left"><img width="800" src="https://user-images.githubusercontent.com/26833433/155040757-ce0934a3-06a6-43dc-a979-2edbbd69ea0e.png" alt="YOLOv5 P5 640 性能图表"></p>

|

||

</details>

|

||

<details>

|

||

<summary>图表说明</summary>

|

||

|

||

- **COCO AP val** 表示在 [交并比 (IoU)](https://www.ultralytics.com/glossary/intersection-over-union-iou) 阈值从 0.5 到 0.95 范围内的[平均精度均值 (mAP)](https://www.ultralytics.com/glossary/mean-average-precision-map),在包含 5000 张图像的 [COCO val2017 数据集](https://docs.ultralytics.com/datasets/detect/coco/)上,使用各种推理尺寸(256 到 1536 像素)测量得出。

|

||

- **GPU Speed** 使用批处理大小为 32 的 [AWS p3.2xlarge V100 实例](https://aws.amazon.com/ec2/instance-types/p4/),测量在 [COCO val2017 数据集](https://docs.ultralytics.com/datasets/detect/coco/)上每张图像的平均推理时间。

|

||

- **EfficientDet** 数据来源于 [google/automl 仓库](https://github.com/google/automl),批处理大小为 8。

|

||

- **复现**这些结果请使用命令:`python val.py --task study --data coco.yaml --iou 0.7 --weights yolov5n6.pt yolov5s6.pt yolov5m6.pt yolov5l6.pt yolov5x6.pt`

|

||

|

||

</details>

|

||

|

||

### 预训练权重

|

||

|

||

此表显示了在 COCO 数据集上训练的各种 YOLOv5 模型的性能指标。

|

||

|

||

| 模型 | 尺寸<br><sup>(像素) | mAP<sup>val<br>50-95 | mAP<sup>val<br>50 | 速度<br><sup>CPU b1<br>(毫秒) | 速度<br><sup>V100 b1<br>(毫秒) | 速度<br><sup>V100 b32<br>(毫秒) | 参数<br><sup>(M) | FLOPs<br><sup>@640 (B) |

|

||

| ------------------------------------------------------------------------------------------------------------------------------------------------------------------------ | ------------------- | -------------------- | ----------------- | ----------------------------- | ------------------------------ | ------------------------------- | ---------------- | ---------------------- |

|

||

| [YOLOv5n](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5n.pt) | 640 | 28.0 | 45.7 | **45** | **6.3** | **0.6** | **1.9** | **4.5** |

|

||

| [YOLOv5s](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5s.pt) | 640 | 37.4 | 56.8 | 98 | 6.4 | 0.9 | 7.2 | 16.5 |

|

||

| [YOLOv5m](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5m.pt) | 640 | 45.4 | 64.1 | 224 | 8.2 | 1.7 | 21.2 | 49.0 |

|

||

| [YOLOv5l](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5l.pt) | 640 | 49.0 | 67.3 | 430 | 10.1 | 2.7 | 46.5 | 109.1 |

|

||

| [YOLOv5x](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5x.pt) | 640 | 50.7 | 68.9 | 766 | 12.1 | 4.8 | 86.7 | 205.7 |

|

||

| | | | | | | | | |

|

||

| [YOLOv5n6](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5n6.pt) | 1280 | 36.0 | 54.4 | 153 | 8.1 | 2.1 | 3.2 | 4.6 |

|

||

| [YOLOv5s6](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5s6.pt) | 1280 | 44.8 | 63.7 | 385 | 8.2 | 3.6 | 12.6 | 16.8 |

|

||

| [YOLOv5m6](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5m6.pt) | 1280 | 51.3 | 69.3 | 887 | 11.1 | 6.8 | 35.7 | 50.0 |

|

||

| [YOLOv5l6](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5l6.pt) | 1280 | 53.7 | 71.3 | 1784 | 15.8 | 10.5 | 76.8 | 111.4 |

|

||

| [YOLOv5x6](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5x6.pt)<br>+ [[TTA]](https://docs.ultralytics.com/yolov5/tutorials/test_time_augmentation/) | 1280<br>1536 | 55.0<br>**55.8** | 72.7<br>**72.7** | 3136<br>- | 26.2<br>- | 19.4<br>- | 140.7<br>- | 209.8<br>- |

|

||

|

||

<details>

|

||

<summary>表格说明</summary>

|

||

|

||

- 所有预训练权重均使用默认设置训练了 300 个周期。Nano (n) 和 Small (s) 模型使用 [hyp.scratch-low.yaml](https://github.com/ultralytics/yolov5/blob/master/data/hyps/hyp.scratch-low.yaml) 超参数,而 Medium (m)、Large (l) 和 Extra-Large (x) 模型使用 [hyp.scratch-high.yaml](https://github.com/ultralytics/yolov5/blob/master/data/hyps/hyp.scratch-high.yaml)。

|

||

- **mAP<sup>val</sup>** 值表示在 [COCO val2017 数据集](https://docs.ultralytics.com/datasets/detect/coco/)上的单模型、单尺度性能。<br>复现请使用:`python val.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65`

|

||

- **速度**指标是在 [AWS p3.2xlarge V100 实例](https://aws.amazon.com/ec2/instance-types/p4/)上对 COCO val 图像进行平均测量的。不包括非极大值抑制 (NMS) 时间(约 1 毫秒/图像)。<br>复现请使用:`python val.py --data coco.yaml --img 640 --task speed --batch 1`

|

||

- **TTA** ([测试时增强](https://docs.ultralytics.com/yolov5/tutorials/test_time_augmentation/)) 包括反射和尺度增强以提高准确性。<br>复现请使用:`python val.py --data coco.yaml --img 1536 --iou 0.7 --augment`

|

||

|

||

</details>

|

||

|

||

## 🖼️ 分割

|

||

|

||

YOLOv5 [v7.0 版本](https://github.com/ultralytics/yolov5/releases/v7.0) 引入了[实例分割](https://docs.ultralytics.com/tasks/segment/)模型,达到了业界顶尖的性能。这些模型设计用于轻松训练、验证和部署。有关完整详细信息,请参阅[发布说明](https://github.com/ultralytics/yolov5/releases/v7.0),并探索 [YOLOv5 分割 Colab 笔记本](https://github.com/ultralytics/yolov5/blob/master/segment/tutorial.ipynb)以获取快速入门示例。

|

||

|

||

<details>

|

||

<summary>分割预训练权重</summary>

|

||

|

||

<div align="center">

|

||

<a align="center" href="https://www.ultralytics.com/yolo" target="_blank">

|

||

<img width="800" src="https://user-images.githubusercontent.com/61612323/204180385-84f3aca9-a5e9-43d8-a617-dda7ca12e54a.png" alt="YOLOv5 分割性能图表"></a>

|

||

</div>

|

||

|

||

YOLOv5 分割模型在 [COCO 数据集](https://docs.ultralytics.com/datasets/segment/coco/)上使用 A100 GPU 以 640 像素的图像大小训练了 300 个周期。模型导出为 [ONNX](https://onnx.ai/) FP32 用于 CPU 速度测试,导出为 [TensorRT](https://developer.nvidia.com/tensorrt) FP16 用于 GPU 速度测试。所有速度测试均在 Google [Colab Pro](https://colab.research.google.com/signup) 笔记本上进行,以确保可复现性。

|

||

|

||

| 模型 | 尺寸<br><sup>(像素) | mAP<sup>box<br>50-95 | mAP<sup>mask<br>50-95 | 训练时间<br><sup>300 周期<br>A100 (小时) | 速度<br><sup>ONNX CPU<br>(毫秒) | 速度<br><sup>TRT A100<br>(毫秒) | 参数<br><sup>(M) | FLOPs<br><sup>@640 (B) |

|

||

| ------------------------------------------------------------------------------------------ | ------------------- | -------------------- | --------------------- | ---------------------------------------- | ------------------------------- | ------------------------------- | ---------------- | ---------------------- |

|

||

| [YOLOv5n-seg](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5n-seg.pt) | 640 | 27.6 | 23.4 | 80:17 | **62.7** | **1.2** | **2.0** | **7.1** |

|

||

| [YOLOv5s-seg](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5s-seg.pt) | 640 | 37.6 | 31.7 | 88:16 | 173.3 | 1.4 | 7.6 | 26.4 |

|

||

| [YOLOv5m-seg](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5m-seg.pt) | 640 | 45.0 | 37.1 | 108:36 | 427.0 | 2.2 | 22.0 | 70.8 |

|

||

| [YOLOv5l-seg](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5l-seg.pt) | 640 | 49.0 | 39.9 | 66:43 (2x) | 857.4 | 2.9 | 47.9 | 147.7 |

|

||

| [YOLOv5x-seg](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5x-seg.pt) | 640 | **50.7** | **41.4** | 62:56 (3x) | 1579.2 | 4.5 | 88.8 | 265.7 |

|

||

|

||

- 所有预训练权重均使用 SGD 优化器,`lr0=0.01` 和 `weight_decay=5e-5`,在 640 像素的图像大小下,使用默认设置训练了 300 个周期。<br>训练运行记录在 [https://wandb.ai/glenn-jocher/YOLOv5_v70_official](https://wandb.ai/glenn-jocher/YOLOv5_v70_official)。

|

||

- **准确度**值表示在 COCO 数据集上的单模型、单尺度性能。<br>复现请使用:`python segment/val.py --data coco.yaml --weights yolov5s-seg.pt`

|

||

- **速度**指标是在 [Colab Pro A100 High-RAM 实例](https://colab.research.google.com/signup)上对 100 张推理图像进行平均测量的。值仅表示推理速度(NMS 约增加 1 毫秒/图像)。<br>复现请使用:`python segment/val.py --data coco.yaml --weights yolov5s-seg.pt --batch 1`

|

||

- **导出**到 ONNX (FP32) 和 TensorRT (FP16) 是使用 `export.py` 完成的。<br>复现请使用:`python export.py --weights yolov5s-seg.pt --include engine --device 0 --half`

|

||

|

||

</details>

|

||

|

||

<details>

|

||

<summary>分割使用示例 <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/segment/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="在 Colab 中打开"></a></summary>

|

||

|

||

### 训练

|

||

|

||

YOLOv5 分割训练支持通过 `--data coco128-seg.yaml` 参数自动下载 [COCO128-seg 数据集](https://docs.ultralytics.com/datasets/segment/coco8-seg/)。对于完整的 [COCO-segments 数据集](https://docs.ultralytics.com/datasets/segment/coco/),请使用 `bash data/scripts/get_coco.sh --train --val --segments` 手动下载,然后使用 `python train.py --data coco.yaml` 进行训练。

|

||

|

||

```bash

|

||

# 在单个 GPU 上训练

|

||

python segment/train.py --data coco128-seg.yaml --weights yolov5s-seg.pt --img 640

|

||

|

||

# 使用多 GPU 分布式数据并行 (DDP) 进行训练

|

||

python -m torch.distributed.run --nproc_per_node 4 --master_port 1 segment/train.py --data coco128-seg.yaml --weights yolov5s-seg.pt --img 640 --device 0,1,2,3

|

||

```

|

||

|

||

### 验证

|

||

|

||

在 COCO 数据集上验证 YOLOv5s-seg 的掩码[平均精度均值 (mAP)](https://www.ultralytics.com/glossary/mean-average-precision-map):

|

||

|

||

```bash

|

||

# 下载 COCO 验证分割集 (780MB, 5000 张图像)

|

||

bash data/scripts/get_coco.sh --val --segments

|

||

|

||

# 验证模型

|

||

python segment/val.py --weights yolov5s-seg.pt --data coco.yaml --img 640

|

||

```

|

||

|

||

### 预测

|

||

|

||

使用预训练的 YOLOv5m-seg.pt 模型对 `bus.jpg` 执行分割:

|

||

|

||

```bash

|

||

# 运行预测

|

||

python segment/predict.py --weights yolov5m-seg.pt --source data/images/bus.jpg

|

||

```

|

||

|

||

```python

|

||

# 从 PyTorch Hub 加载模型(注意:推理支持可能有所不同)

|

||

model = torch.hub.load("ultralytics/yolov5", "custom", "yolov5m-seg.pt")

|

||

```

|

||

|

||

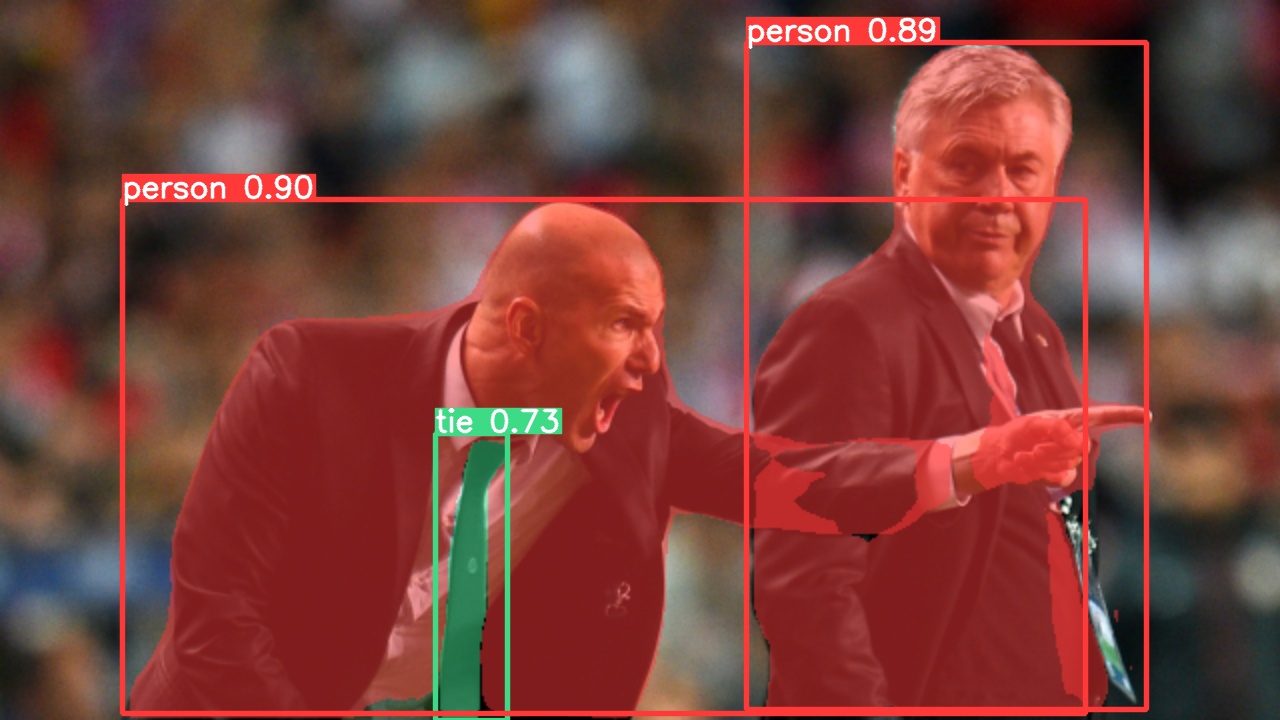

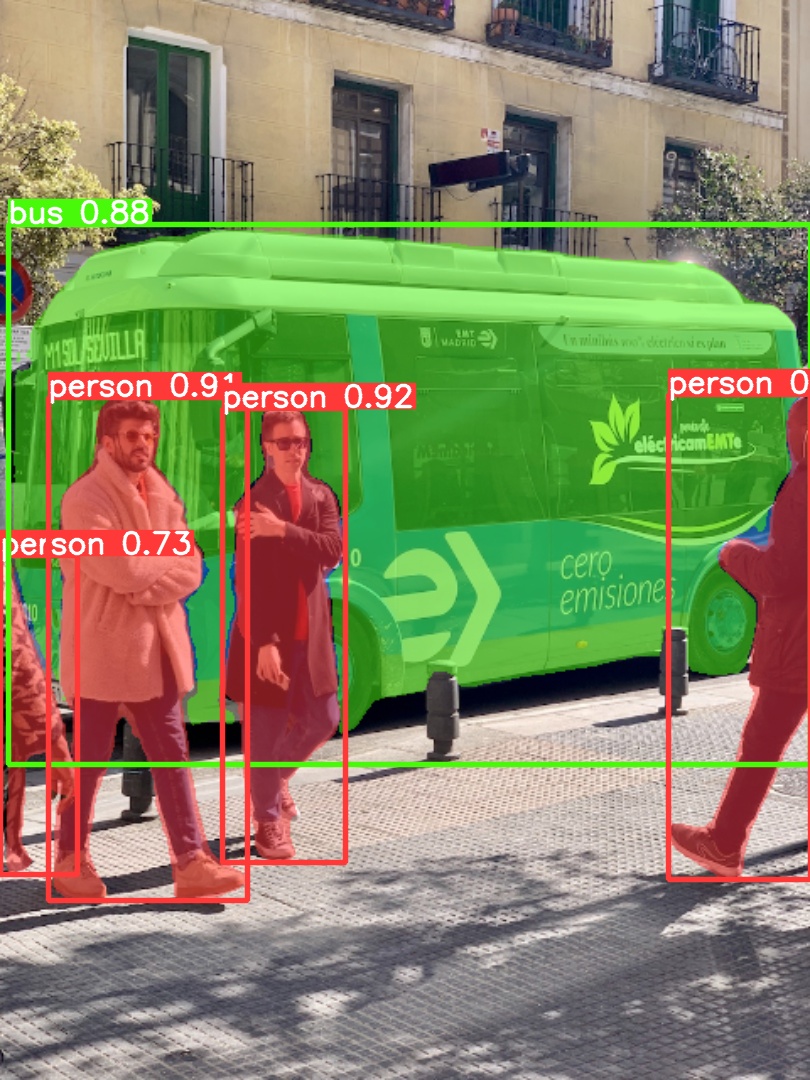

|  |  |

|

||

| :-----------------------------------------------------------------------------------------------------------------------: | :--------------------------------------------------------------------------------------------------------------------: |

|

||

|

||

### 导出

|

||

|

||

将 YOLOv5s-seg 模型导出为 ONNX 和 TensorRT 格式:

|

||

|

||

```bash

|

||

# 导出模型

|

||

python export.py --weights yolov5s-seg.pt --include onnx engine --img 640 --device 0

|

||

```

|

||

|

||

</details>

|

||

|

||

## 🏷️ 分类

|

||

|

||

YOLOv5 [v6.2 版本](https://github.com/ultralytics/yolov5/releases/v6.2) 引入了对[图像分类](https://docs.ultralytics.com/tasks/classify/)模型训练、验证和部署的支持。请查看[发布说明](https://github.com/ultralytics/yolov5/releases/v6.2)了解详细信息,并参阅 [YOLOv5 分类 Colab 笔记本](https://github.com/ultralytics/yolov5/blob/master/classify/tutorial.ipynb)获取快速入门指南。

|

||

|

||

<details>

|

||

<summary>分类预训练权重</summary>

|

||

|

||

<br>

|

||

|

||

YOLOv5-cls 分类模型在 [ImageNet](https://docs.ultralytics.com/datasets/classify/imagenet/) 上使用 4xA100 实例训练了 90 个周期。[ResNet](https://arxiv.org/abs/1512.03385) 和 [EfficientNet](https://arxiv.org/abs/1905.11946) 模型在相同设置下一起训练以进行比较。模型导出为 [ONNX](https://onnx.ai/) FP32(用于 CPU 速度测试)和 [TensorRT](https://developer.nvidia.com/tensorrt) FP16(用于 GPU 速度测试)。所有速度测试均在 Google [Colab Pro](https://colab.research.google.com/signup) 上运行,以确保可复现性。

|

||

|

||

| 模型 | 尺寸<br><sup>(像素) | 准确率<br><sup>top1 | 准确率<br><sup>top5 | 训练<br><sup>90 周期<br>4xA100 (小时) | 速度<br><sup>ONNX CPU<br>(毫秒) | 速度<br><sup>TensorRT V100<br>(毫秒) | 参数<br><sup>(M) | FLOPs<br><sup>@224 (B) |

|

||

| -------------------------------------------------------------------------------------------------- | ------------------- | ------------------- | ------------------- | ------------------------------------- | ------------------------------- | ------------------------------------ | ---------------- | ---------------------- |

|

||

| [YOLOv5n-cls](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5n-cls.pt) | 224 | 64.6 | 85.4 | 7:59 | **3.3** | **0.5** | **2.5** | **0.5** |

|

||

| [YOLOv5s-cls](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5s-cls.pt) | 224 | 71.5 | 90.2 | 8:09 | 6.6 | 0.6 | 5.4 | 1.4 |

|

||

| [YOLOv5m-cls](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5m-cls.pt) | 224 | 75.9 | 92.9 | 10:06 | 15.5 | 0.9 | 12.9 | 3.9 |

|

||

| [YOLOv5l-cls](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5l-cls.pt) | 224 | 78.0 | 94.0 | 11:56 | 26.9 | 1.4 | 26.5 | 8.5 |

|

||

| [YOLOv5x-cls](https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5x-cls.pt) | 224 | **79.0** | **94.4** | 15:04 | 54.3 | 1.8 | 48.1 | 15.9 |

|

||

| | | | | | | | | |

|

||

| [ResNet18](https://github.com/ultralytics/yolov5/releases/download/v7.0/resnet18.pt) | 224 | 70.3 | 89.5 | **6:47** | 11.2 | 0.5 | 11.7 | 3.7 |

|

||

| [ResNet34](https://github.com/ultralytics/yolov5/releases/download/v7.0/resnet34.pt) | 224 | 73.9 | 91.8 | 8:33 | 20.6 | 0.9 | 21.8 | 7.4 |

|

||

| [ResNet50](https://github.com/ultralytics/yolov5/releases/download/v7.0/resnet50.pt) | 224 | 76.8 | 93.4 | 11:10 | 23.4 | 1.0 | 25.6 | 8.5 |

|

||

| [ResNet101](https://github.com/ultralytics/yolov5/releases/download/v7.0/resnet101.pt) | 224 | 78.5 | 94.3 | 17:10 | 42.1 | 1.9 | 44.5 | 15.9 |

|

||

| | | | | | | | | |

|

||

| [EfficientNet_b0](https://github.com/ultralytics/yolov5/releases/download/v7.0/efficientnet_b0.pt) | 224 | 75.1 | 92.4 | 13:03 | 12.5 | 1.3 | 5.3 | 1.0 |

|

||

| [EfficientNet_b1](https://github.com/ultralytics/yolov5/releases/download/v7.0/efficientnet_b1.pt) | 224 | 76.4 | 93.2 | 17:04 | 14.9 | 1.6 | 7.8 | 1.5 |

|

||

| [EfficientNet_b2](https://github.com/ultralytics/yolov5/releases/download/v7.0/efficientnet_b2.pt) | 224 | 76.6 | 93.4 | 17:10 | 15.9 | 1.6 | 9.1 | 1.7 |

|

||

| [EfficientNet_b3](https://github.com/ultralytics/yolov5/releases/download/v7.0/efficientnet_b3.pt) | 224 | 77.7 | 94.0 | 19:19 | 18.9 | 1.9 | 12.2 | 2.4 |

|

||

|

||

<details>

|

||

<summary>表格说明(点击展开)</summary>

|

||

|

||

- 所有预训练权重均使用 SGD 优化器,`lr0=0.001` 和 `weight_decay=5e-5`,在 224 像素的图像大小下,使用默认设置训练了 90 个周期。<br>训练运行记录在 [https://wandb.ai/glenn-jocher/YOLOv5-Classifier-v6-2](https://wandb.ai/glenn-jocher/YOLOv5-Classifier-v6-2)。

|

||

- **准确度**值(top-1 和 top-5)表示在 [ImageNet-1k 数据集](https://docs.ultralytics.com/datasets/classify/imagenet/)上的单模型、单尺度性能。<br>复现请使用:`python classify/val.py --data ../datasets/imagenet --img 224`

|

||

- **速度**指标是在 Google [Colab Pro V100 High-RAM 实例](https://colab.research.google.com/signup)上对 100 张推理图像进行平均测量的。<br>复现请使用:`python classify/val.py --data ../datasets/imagenet --img 224 --batch 1`

|

||

- **导出**到 ONNX (FP32) 和 TensorRT (FP16) 是使用 `export.py` 完成的。<br>复现请使用:`python export.py --weights yolov5s-cls.pt --include engine onnx --imgsz 224`

|

||

|

||

</details>

|

||

</details>

|

||

|

||

<details>

|

||

<summary>分类使用示例 <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/classify/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="在 Colab 中打开"></a></summary>

|

||

|

||

### 训练

|

||

|

||

YOLOv5 分类训练支持使用 `--data` 参数自动下载诸如 [MNIST](https://docs.ultralytics.com/datasets/classify/mnist/)、[Fashion-MNIST](https://docs.ultralytics.com/datasets/classify/fashion-mnist/)、[CIFAR10](https://docs.ultralytics.com/datasets/classify/cifar10/)、[CIFAR100](https://docs.ultralytics.com/datasets/classify/cifar100/)、[Imagenette](https://docs.ultralytics.com/datasets/classify/imagenette/)、[Imagewoof](https://docs.ultralytics.com/datasets/classify/imagewoof/) 和 [ImageNet](https://docs.ultralytics.com/datasets/classify/imagenet/) 等数据集。例如,使用 `--data mnist` 开始在 MNIST 上训练。

|

||

|

||

```bash

|

||

# 使用 CIFAR-100 数据集在单个 GPU 上训练

|

||

python classify/train.py --model yolov5s-cls.pt --data cifar100 --epochs 5 --img 224 --batch 128

|

||

|

||

# 在 ImageNet 数据集上使用多 GPU DDP 进行训练

|

||

python -m torch.distributed.run --nproc_per_node 4 --master_port 1 classify/train.py --model yolov5s-cls.pt --data imagenet --epochs 5 --img 224 --device 0,1,2,3

|

||

```

|

||

|

||

### 验证

|

||

|

||

在 ImageNet-1k 验证数据集上验证 YOLOv5m-cls 模型的准确性:

|

||

|

||

```bash

|

||

# 下载 ImageNet 验证集 (6.3GB, 50,000 张图像)

|

||

bash data/scripts/get_imagenet.sh --val

|

||

|

||

# 验证模型

|

||

python classify/val.py --weights yolov5m-cls.pt --data ../datasets/imagenet --img 224

|

||

```

|

||

|

||

### 预测

|

||

|

||

使用预训练的 YOLOv5s-cls.pt 模型对图像 `bus.jpg` 进行分类:

|

||

|

||

```bash

|

||

# 运行预测

|

||

python classify/predict.py --weights yolov5s-cls.pt --source data/images/bus.jpg

|

||

```

|

||

|

||

```python

|

||

# 从 PyTorch Hub 加载模型

|

||

model = torch.hub.load("ultralytics/yolov5", "custom", "yolov5s-cls.pt")

|

||

```

|

||

|

||

### 导出

|

||

|

||

将训练好的 YOLOv5s-cls、ResNet50 和 EfficientNet_b0 模型导出为 ONNX 和 TensorRT 格式:

|

||

|

||

```bash

|

||

# 导出模型

|

||

python export.py --weights yolov5s-cls.pt resnet50.pt efficientnet_b0.pt --include onnx engine --img 224

|

||

```

|

||

|

||

</details>

|

||

|

||

## ☁️ 环境

|

||

|

||

使用我们预配置的环境快速开始。点击下面的图标查看设置详情。

|

||

|

||

<div align="center">

|

||

<a href="https://bit.ly/yolov5-paperspace-notebook" title="在 Paperspace Gradient 上运行">

|

||

<img src="https://github.com/ultralytics/assets/releases/download/v0.0.0/logo-gradient.png" width="10%" /></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="5%" alt="" />

|

||

<a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb" title="在 Google Colab 中打开">

|

||

<img src="https://github.com/ultralytics/assets/releases/download/v0.0.0/logo-colab-small.png" width="10%" /></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="5%" alt="" />

|

||

<a href="https://www.kaggle.com/models/ultralytics/yolov5" title="在 Kaggle 中打开">

|

||

<img src="https://github.com/ultralytics/assets/releases/download/v0.0.0/logo-kaggle-small.png" width="10%" /></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="5%" alt="" />

|

||

<a href="https://hub.docker.com/r/ultralytics/yolov5" title="拉取 Docker 镜像">

|

||

<img src="https://github.com/ultralytics/assets/releases/download/v0.0.0/logo-docker-small.png" width="10%" /></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="5%" alt="" />

|

||

<a href="https://docs.ultralytics.com/yolov5/environments/aws_quickstart_tutorial/" title="AWS 快速入门指南">

|

||

<img src="https://github.com/ultralytics/assets/releases/download/v0.0.0/logo-aws-small.png" width="10%" /></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="5%" alt="" />

|

||

<a href="https://docs.ultralytics.com/yolov5/environments/google_cloud_quickstart_tutorial/" title="GCP 快速入门指南">

|

||

<img src="https://github.com/ultralytics/assets/releases/download/v0.0.0/logo-gcp-small.png" width="10%" /></a>

|

||

</div>

|

||

|

||

## 🤝 贡献

|

||

|

||

我们欢迎您的贡献!让 YOLOv5 变得易于访问和有效是社区的共同努力。请参阅我们的[贡献指南](https://docs.ultralytics.com/help/contributing/)开始。通过 [YOLOv5 调查](https://www.ultralytics.com/survey?utm_source=github&utm_medium=social&utm_campaign=Survey)分享您的反馈。感谢所有为使 YOLOv5 变得更好而做出贡献的人!

|

||

|

||

[](https://github.com/ultralytics/yolov5/graphs/contributors)

|

||

|

||

## 📜 许可证

|

||

|

||

Ultralytics 提供两种许可选项以满足不同需求:

|

||

|

||

- **AGPL-3.0 许可证**:一种 [OSI 批准的](https://opensource.org/license/agpl-v3)开源许可证,非常适合学术研究、个人项目和测试。它促进开放协作和知识共享。详情请参阅 [LICENSE](https://github.com/ultralytics/yolov5/blob/master/LICENSE) 文件。

|

||

- **企业许可证**:专为商业应用量身定制,此许可证允许将 Ultralytics 软件和 AI 模型无缝集成到商业产品和服务中,绕过 AGPL-3.0 的开源要求。对于商业用例,请通过 [Ultralytics 授权许可](https://www.ultralytics.com/license)联系我们。

|

||

|

||

## 📧 联系

|

||

|

||

对于与 YOLOv5 相关的错误报告和功能请求,请访问 [GitHub Issues](https://github.com/ultralytics/yolov5/issues)。对于一般问题、讨论和社区支持,请加入我们的 [Discord 服务器](https://discord.com/invite/ultralytics)!

|

||

|

||

<br>

|

||

<div align="center">

|

||

<a href="https://github.com/ultralytics"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-github.png" width="3%" alt="Ultralytics GitHub"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="space">

|

||

<a href="https://www.linkedin.com/company/ultralytics/"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-linkedin.png" width="3%" alt="Ultralytics LinkedIn"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="space">

|

||

<a href="https://twitter.com/ultralytics"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-twitter.png" width="3%" alt="Ultralytics Twitter"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="space">

|

||

<a href="https://youtube.com/ultralytics?sub_confirmation=1"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-youtube.png" width="3%" alt="Ultralytics YouTube"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="space">

|

||

<a href="https://www.tiktok.com/@ultralytics"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-tiktok.png" width="3%" alt="Ultralytics TikTok"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="space">

|

||

<a href="https://ultralytics.com/bilibili"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-bilibili.png" width="3%" alt="Ultralytics BiliBili"></a>

|

||

<img src="https://github.com/ultralytics/assets/raw/main/social/logo-transparent.png" width="3%" alt="space">

|

||

<a href="https://discord.com/invite/ultralytics"><img src="https://github.com/ultralytics/assets/raw/main/social/logo-social-discord.png" width="3%" alt="Ultralytics Discord"></a>

|

||

</div>

|